“I think in terms of the AI, the biggest challenge I think for a lot of my customers is memory. Memory actually there’s no relief as far as I know when I talked to the you know, only three key players, two of them I talked to very frequently, and then they told me, ‘Lip-Bu, there’s no relief until 2028.’”

Lip-Bu Tan, Intel CEO, February 2026 (Cisco AI Summit)

Introduction

Memory has emerged as one of the hottest sectors in technology, driven by the explosive growth in AI infrastructure build-out. Memory has become a critical bottleneck in AI systems across both training and inference. With demand rising far faster than supply can respond, memory prices have risen sharply, driving strong growth in revenues and profits across the memory industry. The three main players, SK Hynix, Samsung and Micron, have all benefited tremendously, with their share prices rising roughly 3-4x over the past year. All three have also sold out their entire AI GPU memory production for 2026, and the overall memory market is now forecast to grow by 134%, to US$552 billion (TrendForce forecast), by the end of the year.

“They’ve seen boom and bust 10 times. That’s a lot of layers of scar tissue. During the boom times, it looks like everything is going to be great forever. Then the crash happens and they’re desperately trying to avoid bankruptcy.”

Elon Musk, February 2026 (Dwarkesh Patel Podcast)

Despite the strong industry tailwinds, however, both memory producers and investors are still haunted by the boom-and-bust cycles of the past. The key question now is whether history will repeat once again, or whether this is a new normal, with the AI secular trend sustaining elevated economics and ushering in a memory golden age.

In this note, we first provide a market overview of the memory industry, highlighting different memory types and company market shares. Next, we examine why memory is essential to AI training and inference and how it has emerged as a major bottleneck. We then outline some steps taken to mitigate these constraints. We next present a case study of SK Hynix, focusing on its recent performance and future growth. We also discuss the spillover effects from memory shortages on other sectors, including smartphones and PCs. Finally, we provide a market outlook and discuss the sector risks.

The memory landscape

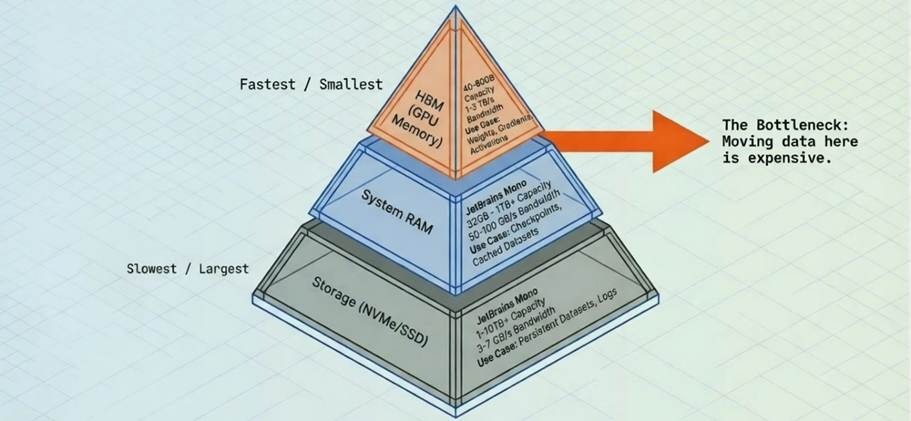

Different types of memory can be viewed as a hierarchy, often illustrated as a pyramid (Figure 1). At the top sits the fastest memory, with the highest bandwidth, where bandwidth refers to how much data can be moved per second and is typically measured in GB/s or TB/s. However, this top-tier memory also has the highest cost and the lowest capacity, where capacity refers to how much data it can hold and is typically measured in GB or TB. As you move down the pyramid, bandwidth falls while capacity rises and cost per bit declines.

Figure 1: The Memory Pyramid Hierarchy (Simplified)

Source: Sam Mokhtari

The memory market is dominated by two categories: DRAM and NAND flash. DRAM is the principal memory technology used for active computing workloads and is much faster than NAND, but it is also more expensive per bit and typically offers less capacity. NAND, by contrast, is used primarily for storage applications such as SSDs and offers much higher capacity at lower cost, but with much lower speed.

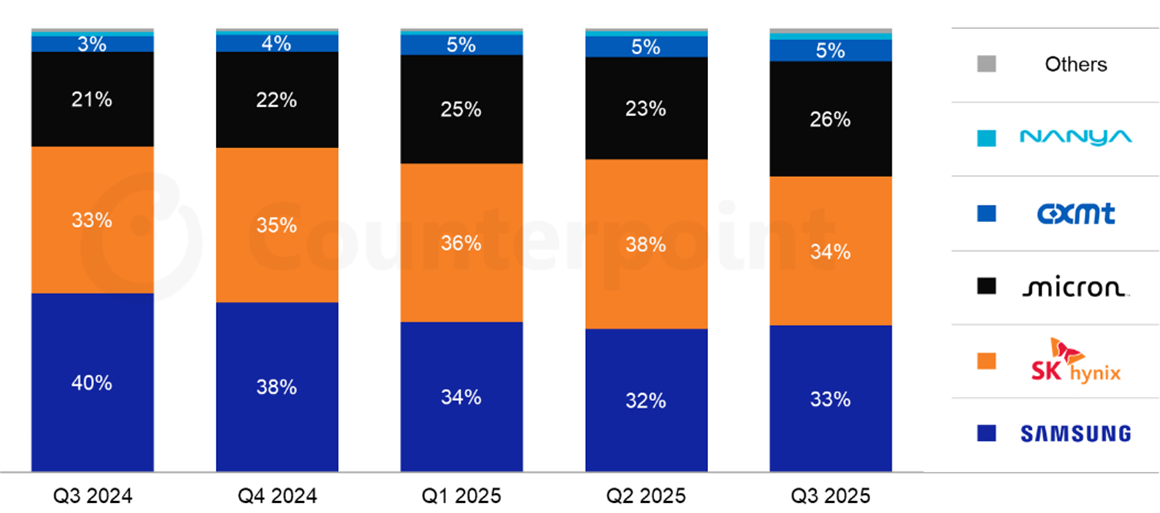

Figure 2: Global DRAM Market Share by Revenue

Source: Counterpoint Research

Within DRAM, there are several sub-types. Conventional DRAM typically serves as CPU-attached system memory, while High-Bandwidth Memory (HBM) is a specialised variant of DRAM used in AI GPUs. HBM provides much higher bandwidth than conventional DRAM and is essential for keeping GPUs fed with data during active computation. SK Hynix is currently the leader in HBM and a major supplier to Nvidia (Figure 3).

Figure 3: Global HBM Market Share by Revenue

Source: Counterpoint Research

The memory bottleneck

The reason memory has become such a critical focus in AI is that it is now a key bottleneck to further progress, due to both bandwidth and capacity constraints.

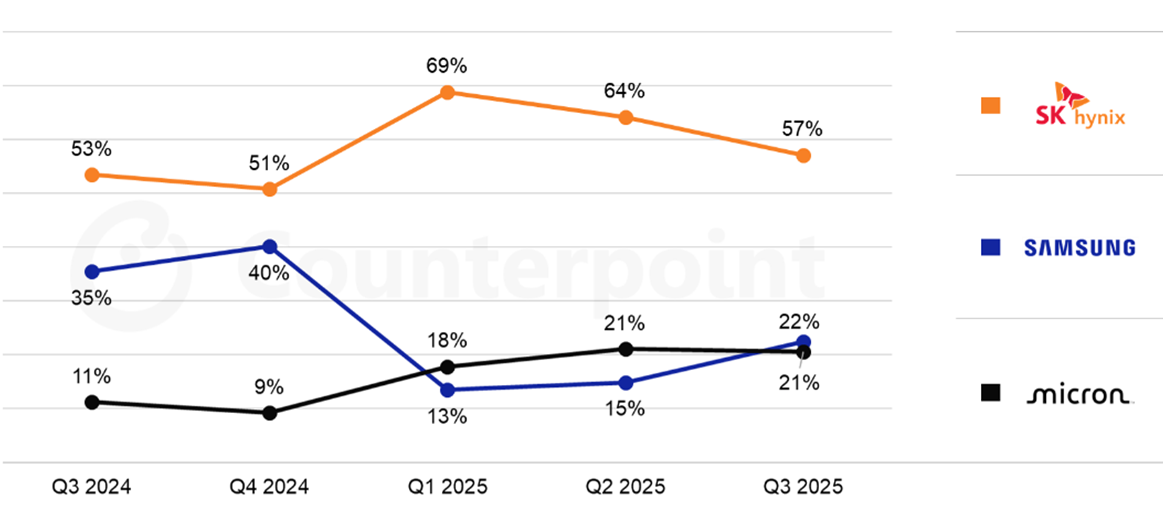

The bandwidth bottleneck stems from GPU compute power having improved at a faster rate than memory bandwidth over the past decades. This divergence has compounded considerably, leading to a significant gap. This is illustrated in the chart below, where compute speed (hardware FLOPS) outgrew memory speed (DRAM bandwidth) by a factor of 600x over a 20-year period (Figure 4).

In practice, this means GPUs frequently finish their calculations and then sit idle waiting for the next batch of data to arrive from memory. The result is expensive GPUs left underutilised and slower model training. The bandwidth bottleneck is also known as the “memory wall,” a term coined in 1995 by William Wulf and Sally McKee to describe the growing gap between processor speeds and memory performance. The term is now used more broadly to encompass capacity constraints as well.
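To put the 600x figure in perspective, it can be converted into an implied annual rate. The short sketch below uses only the two numbers quoted above (600x and 20 years) and assumes the gap compounded at a constant rate, which is a simplification.

```python
import math

# Figures quoted in the text
divergence = 600.0   # growth in compute relative to growth in DRAM bandwidth
years = 20

# Assumption: the gap widened at a constant compound rate each year
annual_gap = divergence ** (1 / years)
doubling_years = math.log(2) / math.log(annual_gap)

print(f"Compute outpaced memory bandwidth by ~{(annual_gap - 1) * 100:.0f}% per year")
print(f"At that rate the gap doubles roughly every {doubling_years:.1f} years")
```

Under that assumption, compute pulled ahead of memory bandwidth by a little under 40% every year, which is why a seemingly modest annual difference turned into a wall.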

Figure 4: The memory wall

Source: Gholami et al., 2024, “AI and Memory Wall”

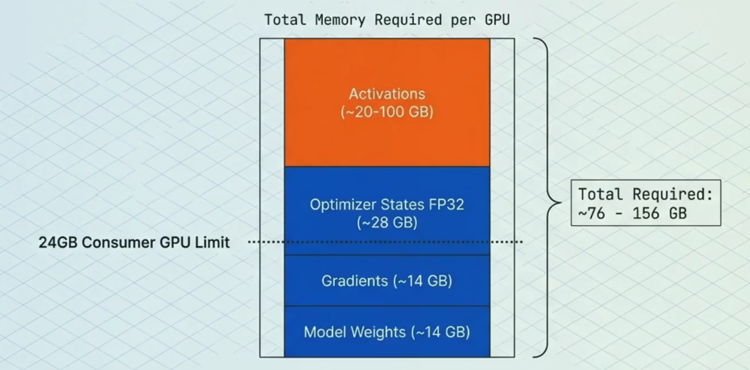

The capacity bottleneck has also become a major limiting factor. Training large models requires storing not just the parameters (the core learned weights), but also gradients (signals for updating weights), optimiser states (extra data used to enhance and stabilise updates) and activations (temporary intermediate results used to compute gradients) (Figure 5). Therefore, limited memory capacity acts as a bottleneck for building more powerful models. Similarly, it can also limit inference performance, as longer context windows exhaust available GPU memory.

Figure 5: Memory breakdown example of a 7 billion parameter model

Source: Sam Mokhtari
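A rough way to see why capacity binds is to count bytes per parameter. The sketch below assumes mixed-precision training with the Adam optimiser, a common accounting convention that may differ from the one used in Figure 5, and it leaves out activations because they depend on batch size and sequence length.

```python
PARAMS = 7e9  # 7 billion parameters, matching the Figure 5 example

# Assumed bytes per parameter (mixed precision + Adam); not taken from the figure
BYTES_PER_PARAM = {
    "parameters (FP16)": 2,
    "gradients (FP16)": 2,
    "optimiser states (FP32 master weights + two Adam moments)": 12,
}

def training_memory_gb(n_params, bytes_per_param):
    """Per-component memory in GB (1 GB = 1e9 bytes), excluding activations."""
    return {name: n_params * b / 1e9 for name, b in bytes_per_param.items()}

breakdown = training_memory_gb(PARAMS, BYTES_PER_PARAM)
total_gb = sum(breakdown.values())

for name, gb in breakdown.items():
    print(f"{name}: {gb:.0f} GB")
print(f"total before activations: {total_gb:.0f} GB")
```

Under these assumptions, even a relatively small 7 billion parameter model needs more than 100 GB before any activations are stored, which is more than the 80 GB of HBM on a single Nvidia H100.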

Solving the bottlenecks

A range of optimisations has been adopted to address these bottlenecks. These include “Precision Reduction,” which entails storing data in GPU memory in lower-precision formats (e.g. FP16 instead of FP32), reducing both the memory capacity and the bandwidth required. A further optimisation is “Parallelism”, which splits the memory footprint that would otherwise sit on a single GPU across multiple GPUs, enabling larger models.
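The effect of both techniques on weight memory can be sketched with simple arithmetic. The 70 billion parameter model and the 8-GPU split below are hypothetical, and the even split assumes ideal sharding with no replicated weights or communication buffers.

```python
# Bytes needed to store one value in each format
BYTES = {"FP32": 4.0, "FP16": 2.0, "INT8": 1.0, "INT4": 0.5}

def weights_gb(n_params, fmt, n_gpus=1):
    """Weight memory per GPU in GB, assuming an ideal even split across GPUs."""
    return n_params * BYTES[fmt] / 1e9 / n_gpus

n_params = 70e9  # hypothetical 70B-parameter model

for fmt in BYTES:
    total = weights_gb(n_params, fmt)
    per_gpu = weights_gb(n_params, fmt, n_gpus=8)
    print(f"{fmt}: {total:.0f} GB in total, {per_gpu:.1f} GB per GPU across 8 GPUs")
```

Halving the precision halves the bytes that must be both stored and moved, while sharding reduces only the per-GPU footprint; the total across the cluster is unchanged.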

A third and particularly consequential development is “Key-Value (KV) cache offloading.” KV cache is a data structure created during inference and grows linearly with prompt length. For use cases such as multi-turn conversations, deep research and code generation, limited and costly GPU memory becomes a significant constraint, especially when the KV cache must be retained in memory for extended periods. KV cache offloading solves this by progressively moving less-active portions of the cache from the GPU’s limited HBM first to CPU DRAM (system memory) and then to SSD storage as the cache grows. This hierarchical approach eases the capacity bottleneck for longer contexts without requiring additional GPUs, while keeping the most frequently used (“hot”) data in the fastest memory tier. The surge in inference workloads and wider adoption of KV cache offloading have, in turn, driven a sharp rise in demand for conventional DRAM and NAND.
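The linear growth of the KV cache, and the way it spills down the hierarchy, can be illustrated with a minimal sketch. The model shape (80 layers, 8 key-value heads, head dimension 128, FP16 values) and the tier budgets are hypothetical. Real offloading systems also decide placement by how recently data was used; the version here only shows the capacity spill.

```python
def kv_cache_gb(n_layers, n_kv_heads, head_dim, seq_len, batch=1, bytes_per_value=2):
    """KV cache size in GB: keys and values (factor of 2) for every layer and token."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch * bytes_per_value / 1e9

def place_in_tiers(cache_gb, hbm_budget_gb, dram_budget_gb):
    """Fill HBM first, then CPU DRAM, and spill whatever remains to SSD."""
    hbm = min(cache_gb, hbm_budget_gb)
    dram = min(cache_gb - hbm, dram_budget_gb)
    ssd = cache_gb - hbm - dram
    return {"HBM": hbm, "CPU DRAM": dram, "SSD": ssd}

# Hypothetical model shape; cache size scales linearly with context length
for seq_len in (8_192, 32_768, 131_072):
    size = kv_cache_gb(n_layers=80, n_kv_heads=8, head_dim=128, seq_len=seq_len)
    tiers = place_in_tiers(size, hbm_budget_gb=10, dram_budget_gb=20)
    print(f"{seq_len:>7} tokens: {size:5.1f} GB -> {tiers}")
```

With these assumed numbers, a single 131,072-token session needs roughly 43 GB of cache, so most of it ends up outside HBM once the per-session HBM budget is exhausted.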

Beyond these system-level techniques, architectural innovations are also reducing memory intensity per unit of AI capability. Mixture of Experts (MoE) models, such as those used by DeepSeek and Mistral, activate only a fraction of the model’s total parameters on any given forward pass. This means a model with hundreds of billions of parameters may only require memory bandwidth for a small subset during each computation, significantly easing both bandwidth and capacity demands relative to a dense model of equivalent capability. Similarly, distillation, namely the process of training smaller, more efficient models to replicate the outputs of larger ones, is producing compact models that deliver strong performance with a fraction of the memory footprint. Together, these developments mean that useful AI capability is growing faster than raw memory consumption, which has important implications for the demand outlook.
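The MoE effect can be made concrete by comparing total and active parameters. The configuration below is entirely hypothetical and is not the published architecture of any DeepSeek or Mistral model; it simply shows how routing each token to a few experts shrinks the weights touched per forward pass.

```python
def moe_parameter_counts(shared_params, params_per_expert, n_experts, experts_per_token):
    """Return (total, active, active fraction) for a simple MoE layout."""
    total = shared_params + n_experts * params_per_expert
    active = shared_params + experts_per_token * params_per_expert
    return total, active, active / total

# Hypothetical layout: 10B shared weights, 64 experts of 5B each, 4 experts per token
total, active, fraction = moe_parameter_counts(
    shared_params=10e9, params_per_expert=5e9, n_experts=64, experts_per_token=4
)

print(f"total parameters:  {total / 1e9:.0f}B")
print(f"active per token:  {active / 1e9:.0f}B ({fraction:.0%} of total)")
```

In this illustration only about a tenth of the weights are read for each token, which is the source of the bandwidth saving described above.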

On the hardware side, Processing-in-Memory (PIM) represents an emerging approach that differs fundamentally from the software-level optimisations above. Rather than accepting the separation between compute and memory and working around it, PIM integrates computational capabilities directly into the memory itself, reducing the need to move data back and forth. SK Hynix (see next section) showcased several PIM-related technologies at CES 2026, including an accelerator card prototype specialised for large language models and a Compute-using-DRAM product. The HBM4 standard itself is a stepping stone in this direction, introducing a logic base die manufactured using a logic process rather than a traditional DRAM process, enabling basic computational tasks to be performed on-die. Industry experts view this as a pivotal early step toward fuller PIM integration, with specialised AI processing units expected to be embedded directly into HBM logic dies by 2027.

Although these optimisations help reduce bottlenecks, they come with trade-offs, such as potential accuracy losses or added complexity. The memory bottleneck also persists, as the appetite for higher bandwidth and more capacity remains insatiable. While the memory suppliers are investing heavily to meet this demand, supply takes time to respond: bringing a new state-of-the-art fabrication plant (fab) online typically takes around five years once construction, tool installation and yield ramp are factored in. As such, there do not appear to be any near-term solutions that fully resolve the current bottlenecks.

SK Hynix case study

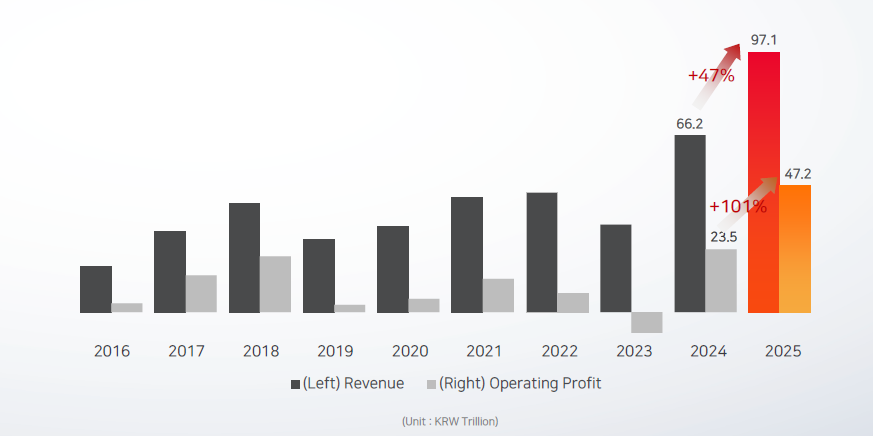

SK Hynix saw strong growth in FY2025, with overall revenue up 47% year-over-year (Figure 6) and HBM revenue more than doubling and making a significant contribution. There was also strong growth in conventional DRAM and NAND, driven to a large extent by growth in inference and the use of KV cache offloading. Operating profit for the year grew 101%, driven largely by price increases.

Figure 6: SK Hynix revenue and profit growth

Source: SK Hynix

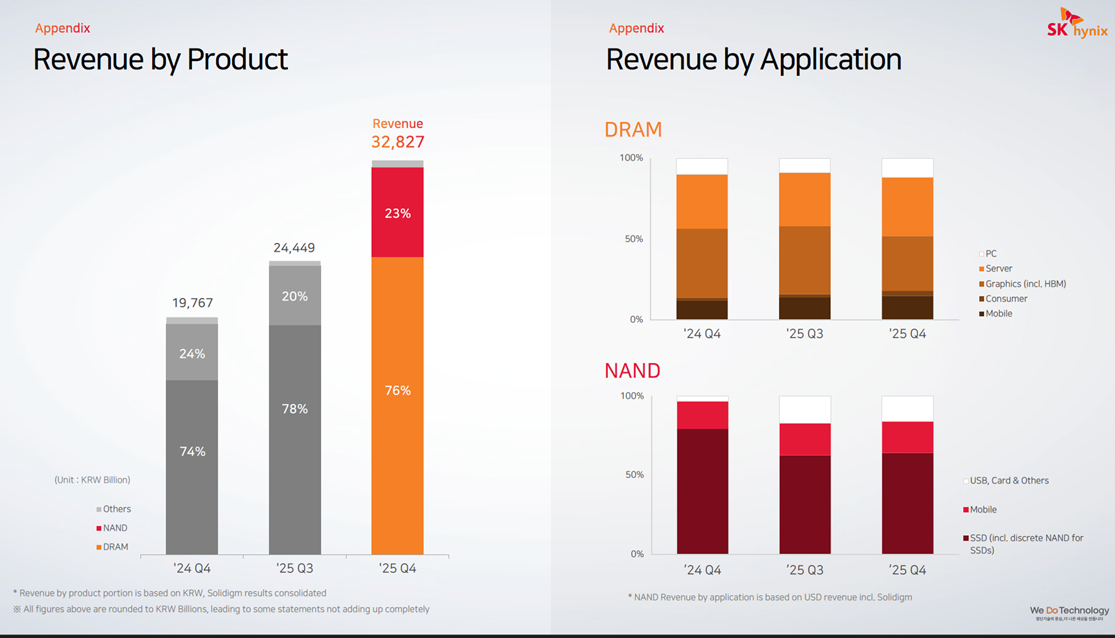

Zooming in on the Q4 2025 results (Figure 7), growth accelerated sharply, with revenue up 66% year-over-year and 34% quarter-over-quarter. The sequential growth was driven largely by DRAM average selling price (ASP), which rose in the mid-20s%, and to a lesser extent by DRAM shipment growth (bit growth), which was only in the low single digits. Shipment growth was driven by both HBM3E products and DDR5 for servers, where shipments of high-density DDR5 modules were up roughly 50% quarter-over-quarter. NAND also saw strong revenue growth, with sequential ASP growth in the low 30s% and shipments up roughly 10%.

Figure 7: SK Hynix revenue by product and application

Source: SK Hynix
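Price and volume growth compound rather than add, which is worth making explicit. The sketch below plugs in point estimates for the quoted ranges (25% for “mid-20s%”, 3% for “low single digits”, 32% for “low 30s%” and 10% for NAND shipments); these point values are assumptions, not reported figures.

```python
def revenue_growth(asp_growth, bit_growth):
    """Sequential revenue growth implied by price and shipment growth (as fractions)."""
    return (1 + asp_growth) * (1 + bit_growth) - 1

# Assumed point estimates within the ranges quoted in the text
dram = revenue_growth(asp_growth=0.25, bit_growth=0.03)
nand = revenue_growth(asp_growth=0.32, bit_growth=0.10)

print(f"Implied DRAM revenue growth: {dram:.1%}")
print(f"Implied NAND revenue growth: {nand:.1%}")
```

On these inputs, almost all of the DRAM growth comes from price rather than volume, which matches the point that the quarter was price-driven.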

For FY26, SK Hynix has already secured full customer demand for its entire DRAM and NAND production and remains capacity constrained. DRAM bit shipments are guided to grow over 20% and NAND is guided to grow in the high teens%. Its new Cheongju M15X fab is currently on track to begin mass HBM production in H1 2026 and FY26 CAPEX is also set to increase considerably as it continues to invest in new fabs to expand production capacity. SK Hynix is also expected to maintain its leadership in HBM3E whilst simultaneously ramping up its next-generation GPU memory, HBM4, which started mass production in September 2025. HBM4 can process over 2 TB/s versus 1.2 TB/s for HBM3E and has a power efficiency improvement of more than 40%. Overall, analysts still expect HBM3E to make up two-thirds of total HBM shipments in 2026, with SK Hynix maintaining its market leading position. Analysts also expect SK Hynix to achieve roughly 70% market share for HBM4 in Nvidia’s next-generation Rubin platform.

Spillover effects in other sectors

“The AI-driven growth in the data centre has led to a surge in demand for memory and storage. Micron has made the difficult decision to exit the Crucial consumer business in order to improve supply and support for our larger, strategic customers in faster-growing segments.”

Sumit Sadana, Micron EVP and Chief Business Officer (December 2025)

“PCs and mobile devices are expected to see short-term shipment adjustments due to rising component costs and weakened consumer sentiment. Memory content per device is expected to grow at a slower pace due to price increases and supply constraints.”

Song Hyun Jong, SK Hynix President (Q4 2025)

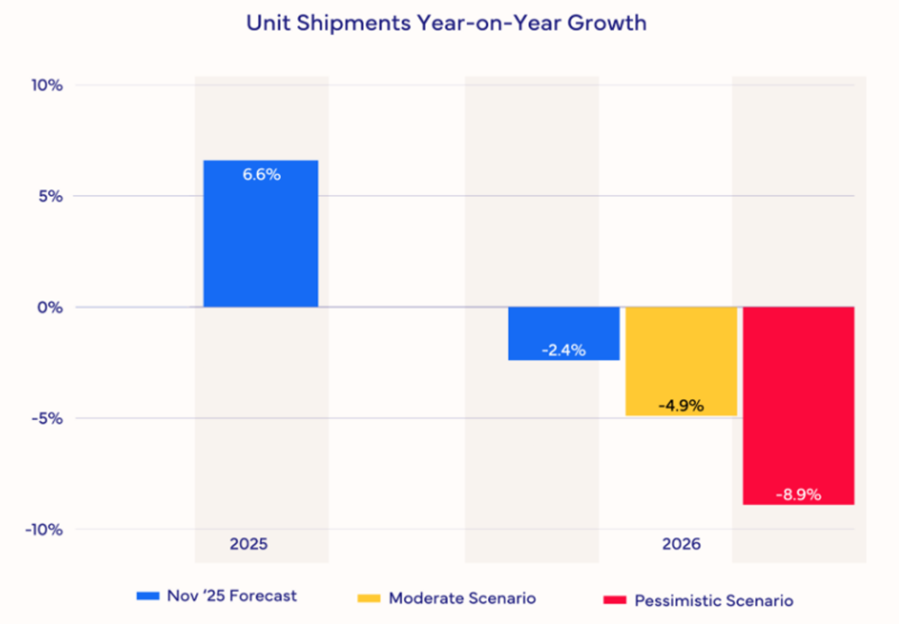

The memory supply-demand imbalance is also impacting other sectors, including smartphones and PCs. Memory manufacturers are prioritising lucrative AI-grade DRAM over traditional DRAM, creating pricing pressure across consumer electronics. As a result, IDC estimates that smartphone shipments will shrink 2.9% year-over-year in 2026. It also expects that prices will have to rise significantly, specifications will have to be cut, or both. Furthermore, it expects lower-end smartphones with thin margins to be hit hardest. High-end smartphone makers such as Apple and Samsung are also expected to face pressure, though they will likely be more insulated thanks to long-term supply contracts and market power. For the PC market, IDC expects even deeper disruption, with shipments forecast to fall 4.9% (Figure 8). IDC also expects PC vendors with larger volumes to be better positioned and to take share away from the smaller, more vulnerable brands.

Figure 8: PC Market Forecast Scenarios

Source: IDC

Short to medium term outlook

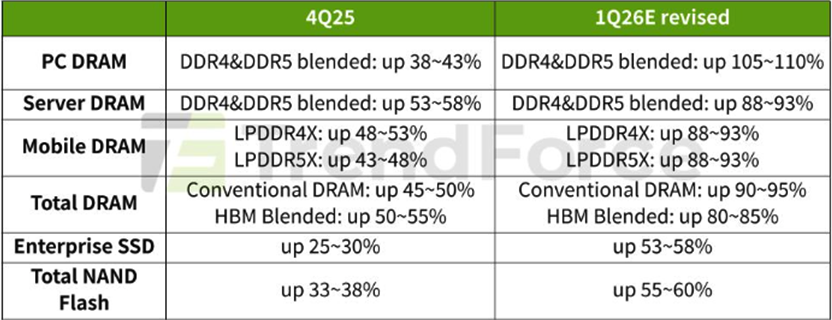

In the short- to medium-term, market forecasts for the memory sector differ materially, largely reflecting the high uncertainty around pricing levels. Additionally, given how rapidly the space is evolving, forecasts are frequently being revised. In its most recent forecast, TrendForce revised its numbers upward significantly and now expects Q1 2026 conventional DRAM contract prices to rise 90-95% and blended HBM price to be up 80-85%, quarter-over-quarter (Figure 9).

Figure 9: Memory Price Growth Forecasts 4Q25-1Q26

Source: TrendForce

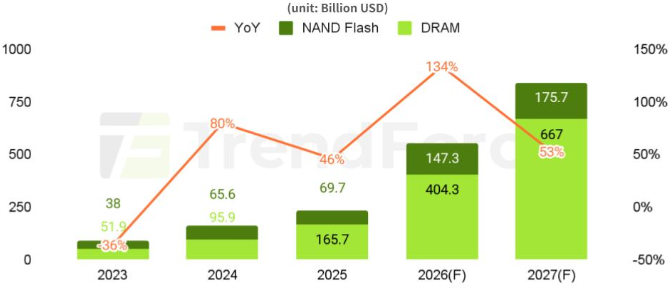

For the overall memory market (DRAM + NAND) in 2026, TrendForce forecasts 134% revenue growth, reaching a market size of US$551.6 billion (Figure 10). For 2027, it forecasts 53% growth, reaching a market size of US$842.7 billion.

Figure 10: DRAM and NAND Flash Revenue Projections

Source: TrendForce
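The forecast figures can be cross-checked against one another with a few lines of arithmetic. The sketch below uses only the TrendForce numbers quoted above; the implied 2025 base is derived here, not reported.

```python
size_2026_bn = 551.6   # forecast 2026 market size, US$ billion
growth_2026 = 1.34     # forecast 2026 growth (134%)
size_2027_bn = 842.7   # forecast 2027 market size, US$ billion

# Back out the 2025 base and the growth rate implied by the two market sizes
implied_2025_bn = size_2026_bn / (1 + growth_2026)
implied_growth_2027 = size_2027_bn / size_2026_bn - 1

print(f"Implied 2025 market size: US${implied_2025_bn:.1f} billion")
print(f"Implied 2027 growth: {implied_growth_2027:.1%}")
```

The implied 2027 growth rounds to the quoted 53%, and the forecasts together imply a market that more than triples between 2025 and 2027.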

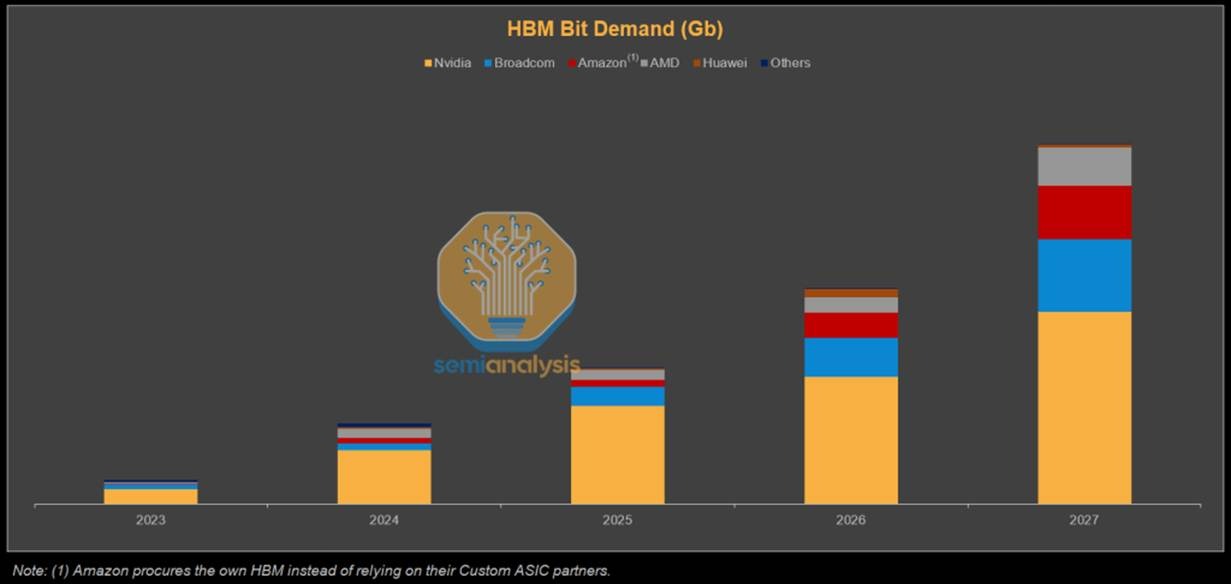

On HBM bit shipments, SemiAnalysis forecasts that Nvidia will still dominate demand, while Broadcom, Amazon and AMD are also expected to grow their shares significantly (Figure 11).

Figure 11: HBM Bit (shipments) Demand Forecast

Source: SemiAnalysis

Longer term supply outlook

“I’d say my biggest concern actually is memory. The path to creating logic chips is more obvious than the path to having sufficient memory to support logic chips. That’s why you see DDR prices going ballistic.”

“They’re building fabs as fast as they can. So is Samsung. They’re pedal to the metal. They’re going balls to the wall, as fast as they can. It’s still not fast enough.”

Elon Musk, February 2026 (Dwarkesh Patel Podcast)

While memory market forecasts for 2028 and beyond are even more uncertain, if AI proves to be a secular trend that becomes integrated across all facets of society, this should sustain strong memory demand over the longer term. Demand would not only stem from data centres on Earth, but also potentially data centres in space, as well as self-driving cars and humanoids.

The key question then lies on the supply side, where figures like Elon Musk have voiced concerns that production capacity is likely to fail to keep pace with surging demand. Musk has urged both Samsung and Micron to build fabs faster and has said he would guarantee to purchase the output of those fabs. However, he does not think production will match his needs, which is why his proposed TeraFab ambitions extend beyond logic and packaging to include memory.

Nevertheless, TeraFab should not be viewed as a guaranteed solution to ease supply constraints any time soon. Memory manufacturing is highly specialised and requires deep domain expertise, process know-how and execution capability, making the industry extremely difficult to enter. While Musk has a strong track record of entering complex industries, which makes success more likely than not, it is not assured. Eventual success would also take considerable time, not only because building, equipping and ramping a fab is a multi-year process, but also because a new entrant must assemble a world-class team, develop process expertise and work through a steep learning curve. Moreover, output may be used primarily to support Musk’s own companies rather than being supplied broadly to third parties.

New supply could also come from existing Chinese suppliers such as CXMT. However, they are generally considered to be several years behind the frontier, and geopolitical considerations may make it difficult for them to participate in existing supply chains. Therefore, it currently looks unlikely that there will be a surprise supply shock in AI-grade memory. As such, if AI demand remains robust and the current build-out continues, supply constraints are likely to persist for many years to come.

Risks to memory demand

One of the larger risks to both the memory sector and the broader AI build-out is energy availability. AI data centres are extremely power-intensive and, given limited growth in power generation and grid capacity across the West, this could constrain deployment. Musk believes that already by the end of 2026 there will be an excess of chips, as there will not be enough available power to turn them all on. It is, however, unclear how power constraints might impact demand and pricing over time, though the effect may be more moderating than destructive, as newer chips are significantly more power efficient, resulting in continued demand as hyperscalers replace older inefficient chips.

In the long run, if orbital data centres become viable, which Musk now believes is three years away, the power constraint would be largely removed. That said, Musk’s timelines are often optimistic, and he himself has said that his timelines are typically set with only a 50% probability of being achieved. Other leaders in the AI space tend to view orbital data centres as something more like a decade away. There may also be other pathways to easing the energy constraints over time, including a faster build-out of nuclear capacity or meaningful breakthroughs in fusion, but these too are likely to take time to scale. As a result, power-related constraints could remain a long-term headwind to AI infrastructure growth and, by extension, the memory market.

Aside from energy, efficiency improvements on both the software and hardware side could moderate memory demand growth. As discussed earlier, architectural innovations such as MoE and distillation are reducing the memory required per unit of useful AI output. If these trends accelerate, for instance, if future models achieve frontier-level performance at a fraction of current parameter counts, the rate of growth in memory demand could slow materially, even if total demand continues to rise. Aggressive quantisation techniques, which have already moved well beyond FP16 to INT8 and INT4 in production inference, further compress memory requirements. On the hardware front, PIM could reduce the need for extreme memory bandwidth by performing certain computations within the memory itself. However, since PIM is being developed by the incumbent memory producers, most notably SK Hynix, it is more likely to enhance their competitive positioning than to disrupt their economics, at least in the medium term.

Finally, there could also be diminishing returns to scaling models, as well as challenges with further AI adoption and monetisation, which could subsequently reduce CAPEX. A scenario in which AI monetisation disappoints and hyperscalers pull back spending, coinciding with the arrival of new fab capacity currently under construction, could produce the kind of oversupply bust that has historically plagued the memory industry.

Conclusion

If the AI secular trend persists, driven by widespread adoption and positive ROI, the memory market could enter a prolonged era of structural tightness rather than repeating historical boom-and-bust cycles. In this scenario, demand would continue to be led by hyperscale data centres, both on Earth and potentially in space, as well as adjacent compute-intensive categories such as full self-driving vehicles and humanoid robotics. This continued demand expansion would keep supply struggling to catch up, compounded by the lengthy timelines for new fabs to become fully operational, thereby maintaining elevated prices and high margins for many years to come. That said, meaningful risks remain, such as energy constraints and potential AI monetisation challenges, which could reduce aggregate memory demand, leading to an oversupply bust. Barring such disruptions, however, the memory market may well be entering a golden age.

At AlphaTarget, we invest our capital in some of the most promising disruptive businesses at the forefront of secular trends; and utilise stage analysis and other technical tools to continuously monitor our holdings and manage our investment portfolio. AlphaTarget produces cutting edge research and our subscribers gain exclusive access to information such as the holdings in our investment portfolio, our in-depth fundamental and technical analysis of each company, our portfolio management moves and details of our proprietary systematic trend following hedging strategy to reduce portfolio drawdowns. To learn more about our research service, please visit https://alphatarget.com/subscriptions/.

Introduction

“We want to put these data centres in space… There’s no doubt to me that a decade or so away we’ll be viewing it as a more normal way to build data centres.”

Sundar Pichai, CEO of Google, December 2025 (Fox)

To many, AI data centres in space may sound like a pie-in-the-sky idea. Yet, a growing number of tech titans, companies and nation states are increasingly viewing orbital data centres as a potential solution to rising energy and infrastructure constraints. The attraction lies in their access to near-constant solar energy, the absence of land and freshwater restrictions and insulation from grid bottlenecks that are already limiting AI data centre expansion on Earth. However, the hurdles to making space-based data centres economically viable at scale remain significant, with launch costs still needing to fall by more than 10x. As a result, space-based compute is not a near-term replacement for terrestrial infrastructure, but a potential longer-term solution, with economic viability most commonly estimated for the early to mid-2030s.

Figure 1: Paul Graham’s and Elon Musk’s exchange on the future of AI data centres

Source: X

In this note, we first examine SpaceX and its role as the primary enabler of the modern space economy. Next, we highlight a set of space data centre initiatives and assess the potential long-term benefits. We then outline the key challenges that must be overcome for space data centres to scale, alongside the early progress made by pioneers such as Starcloud, which recently trained the first AI model in orbit. Finally, we highlight some of the publicly listed companies that have exposure to this emerging field.

SpaceX

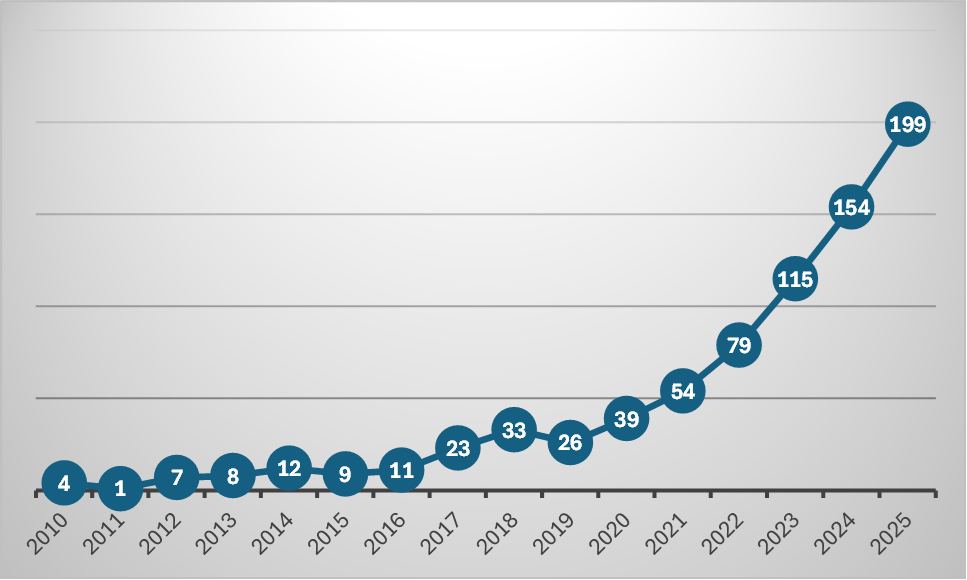

There has been a sharp acceleration in launch activity over the past decade, driven primarily by SpaceX. Founded in 2002 by Elon Musk, the company has the long-term objective of making life multiplanetary. To pursue this vision, SpaceX first needed to overcome the foundational challenges of payload economics and operational scale, while also establishing a sustainable commercial business. A key pillar of this strategy has been to reduce launch costs by recovering and re-flying rocket boosters: SpaceX first landed a Falcon 9 booster in December 2015 and first re-flew one in March 2017. This approach is now critical to SpaceX’s operating model, materially increasing launch cadence, lowering the cost per kilogram to orbit and underpinning a wide range of commercial activity in space.

Figure 2: FAA-licensed U.S. Orbital Commercial launches

Source: FAA

The focus on reusability and operational scale has culminated in the development of Starship, SpaceX’s next-generation rocket, designed to be fully reusable. Compared to the Falcon 9 rocket, which can deliver roughly 23 tonnes to low Earth orbit, Starship is expected to carry up to 150 tonnes when fully reusable (250 tonnes in an expendable configuration). This would represent a step-change in payload capacity and fundamentally alter the economics of deploying large assets in space. Starship flights are currently targeted for 2026, with Musk expecting this to increase SpaceX’s share of Earth’s total payload to orbit from around 90% to 98% over the next two years.

Figure 3: Starship

Source: SpaceX Instagram

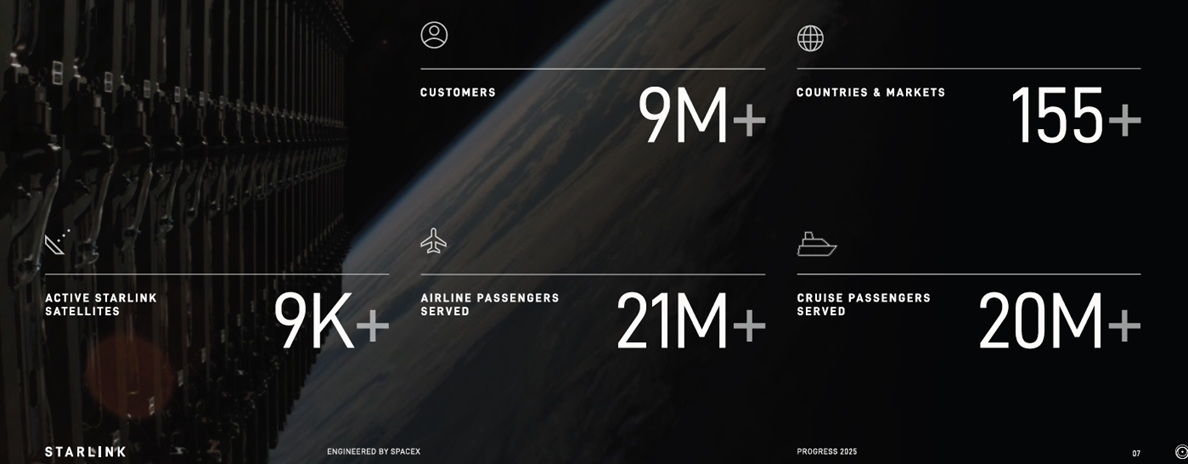

In terms of financials, Musk has commented that SpaceX has been cashflow-positive for many years and that 2025 revenues were projected at US$15.5 billion. Of this, only US$1.1 billion is expected to come from NASA, with the largest revenue contributor being Starlink. Starlink is the company’s satellite constellation which delivers internet access across the globe, typically used in remote and underserved regions. It is also powering in-flight Wi-Fi for major airlines, including United Airlines and Qatar Airways, as well as Wi-Fi for cruise lines such as Royal Caribbean and Carnival.

Figure 4: Starlink data

Source: Starlink 2025 Progress Report

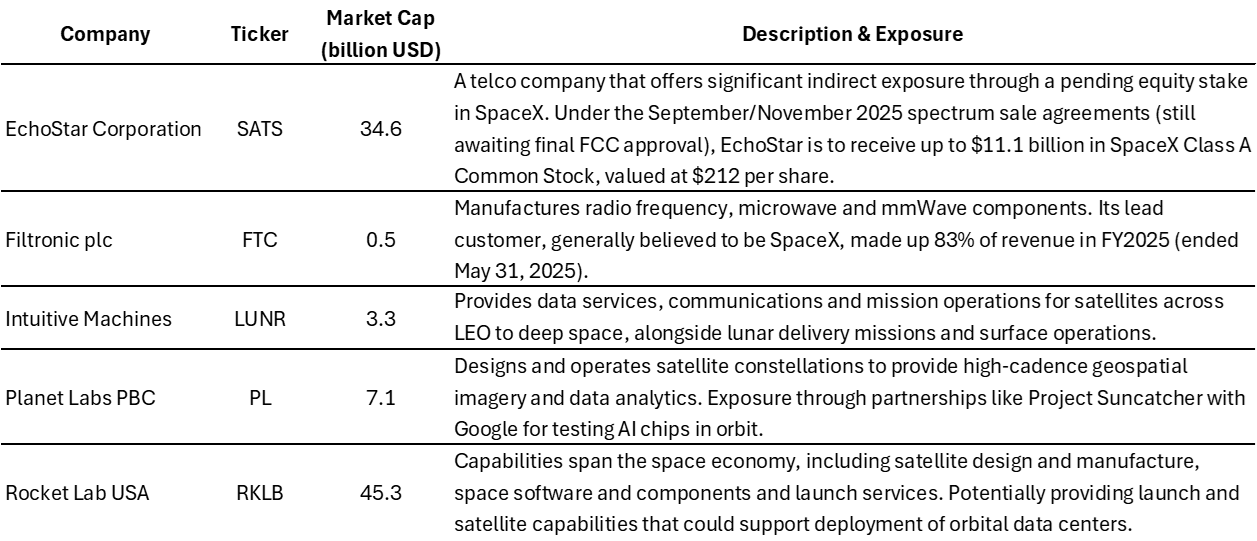

In 2024, Starlink also began deploying satellites with Direct-to-Cell capabilities, aiming ultimately to eliminate worldwide mobile dead zones. The service is currently available in 22 countries with 27 mobile network operator partners such as T-Mobile. Initial Direct-to-Cell capabilities have generally been limited to texting and certain apps, although functionality is expected to expand. Its next-generation Direct-to-Cell satellites will be launched by Starship and within two years it aims to deliver high-bandwidth internet enabling medium-resolution video streaming. This is being enabled by its US$17 billion purchase of spectrum from EchoStar, announced in September 2025.

According to a December 2025 Bloomberg article, SpaceX is reportedly targeting an IPO in 2026 at a valuation of around US$1.5 trillion, raising more than US$30 billion. This implies a roughly 100x revenue multiple on 2025 estimates, a valuation that embeds significant optimism around Starship, Starlink growth, and nascent opportunities such as space-based compute. Musk has linked valuation growth to advances in Starship, Starlink, and direct-to-cell spectrum, but to the best of our knowledge has not confirmed the valuation. Musk did, however, appear to indirectly confirm the IPO plans by replying “Eric is accurate” on X to an article written by Eric Berger. The article argued that a primary rationale for going public would be to secure a substantial capital injection to fund the development of data centres in space.
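The multiple follows directly from the reported valuation and the revenue projection cited earlier. A minimal check, using only figures quoted in this note:

```python
valuation_bn = 1500.0     # reported target valuation, US$ billion
revenue_2025_bn = 15.5    # projected 2025 revenue, US$ billion
raise_bn = 30.0           # reported minimum raise, US$ billion

revenue_multiple = valuation_bn / revenue_2025_bn
raise_share = raise_bn / valuation_bn

print(f"Valuation / 2025 revenue: {revenue_multiple:.0f}x")
print(f"Raise as a share of valuation: {raise_share:.0%}")
```

The raise would represent only around 2% of the reported valuation, so the headline number rests almost entirely on how the market prices future growth.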

A new era of data centres

“I think even perhaps in the four or five year time frame the lowest cost way to do AI compute will be with solar powered AI satellites.”

Elon Musk, SpaceX CEO, November 2025 (U.S.-Saudi Investment Forum)

Beyond SpaceX, interest in orbital data centres is expanding across both the technology sector and among governments. In November 2025, Google outlined plans under Project Suncatcher to deploy solar-powered satellites to house its tensor processing units (TPUs), with prototype launches targeted for early 2027. Eric Schmidt, former Google CEO, indicated that orbital compute formed part of the strategic rationale behind his investment in the rocket company Relativity Space. Nvidia is also investing in the sector by backing the startup Starcloud, which is building space data centres. Jeff Bezos, owner of the space company Blue Origin, has also endorsed the concept, commenting that there will eventually be giant gigawatt space data centres. China’s Zhejiang Lab is also pursuing orbital data centres, aiming to build a network of thousands of satellites to enable AI data processing, having already launched its first batch in May 2025.

Benefits

“We still don’t appreciate the energy needs of this technology…there’s no way to get there without a breakthrough…we need fusion or we need like radically cheaper solar plus storage or something at massive scale like a scale that no one is really planning for.”

Sam Altman, CEO of OpenAI, January 2024 (Bloomberg, Davos)

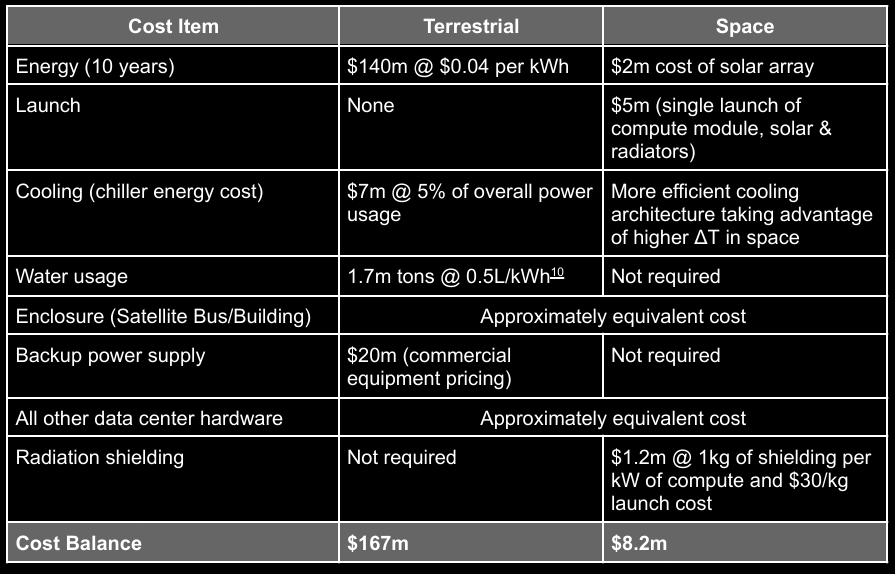

There are several compelling reasons to deploy data centres in space, but energy is the primary driver. AI data centres require vast amounts of power, which is placing significant strain on electricity grids and restricting future expansion. In space, however, data centres would have access to abundant solar energy, as the satellites can stay exposed to nearly constant sunlight, unaffected by weather and day-night cycles. This steady energy flow also removes the need for backup power or batteries. Additionally, sunlight is stronger in space because there is no atmosphere to attenuate and scatter the solar radiation, resulting in peak power generation that is approximately 40% higher than on Earth, even on a clear day. Proponents estimate that solar panels in space could generate roughly 5x more energy than equivalent terrestrial arrays and that, when total system costs are compared with prevailing grid electricity prices, energy costs could be around 10–20x lower.

Figure 5: Cost comparison of a single 40 MW cluster operated for 10 years in space vs on land

Source: Starcloud Whitepaper 2024
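One way to reconcile a 40% higher peak output with a roughly 5x energy advantage is to account for how many hours a panel actually produces power. The capacity factors below are illustrative assumptions rather than figures taken from the sources cited here; only the 40% uplift comes from the text.

```python
peak_uplift = 1.40   # from the text: ~40% higher peak generation in space
cf_orbit = 0.95      # assumed capacity factor in near-constant sunlight
cf_ground = 0.25     # assumed capacity factor for a terrestrial solar array

# Energy over time scales with peak output multiplied by capacity factor
energy_ratio = peak_uplift * cf_orbit / cf_ground

print(f"Energy per panel in orbit vs on the ground: ~{energy_ratio:.1f}x")
```

Under these assumptions most of the advantage comes from near-continuous illumination rather than from stronger sunlight.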

“The only cost on the environment will be on the launch, then there will be 10x carbon-dioxide savings over the life of the data centre compared with powering the data centre terrestrially on Earth.”

Philip Johnston, Starcloud CEO, October 2025 (NVIDIA blog)

Other benefits of space-based data centres relate to environmental impact. Because water cooling is more effective than air cooling, terrestrial data centres are increasingly shifting to it, particularly in warmer climates. An EESI article reported that an estimated 80% of the water consumed in such systems is lost to evaporation. It also found that a single large hyperscale data centre may consume up to 1.8 billion gallons of water annually, equivalent to the needs of a town of 10,000–50,000 people. This can create significant pressure on local freshwater sources, such as rivers and aquifers, increasing risks for nearby communities. While the industry is evolving, with some operators deploying closed-loop cooling designs that materially reduce water consumption, locating data centres in space would eliminate the need for water-based cooling altogether, reducing pressure on local water supplies. In addition, it would remove the need to acquire large areas of land and associated terrestrial infrastructure, while also reducing greenhouse gas emissions.

Challenges and risks

While the theoretical benefits are compelling, the practical hurdles to achieving space-based data centres at scale are substantial and should not be underestimated.

The most significant barrier is launch costs. SpaceX currently charges US$6,500/kg. Starcloud estimates that launch costs will need to fall to US$500/kg before it can break even. Google estimates that launch costs will have to fall to US$200/kg before space data centres become comparable to those on Earth, and expects this to happen by the mid-2030s under the current trajectory. By contrast, Musk is more optimistic, with a 4-5 year timeline for when AI data centres become economically viable and is expecting to get launch costs down well below US$100/kg.

This divergence in timelines (mid-2030s versus 2029–2030) warrants scrutiny. Musk has a well-documented history of ambitious forecasting: Tesla’s Robotaxi was originally promised for 2020, Full Self-Driving has been “one year away” for nearly a decade, and the Cybertruck shipped years behind schedule. Furthermore, the gap between US$6,500/kg today and the US$200–500/kg required for viability represents a roughly 13–33x reduction, a transformation that, even under optimistic assumptions, will likely take years to eventuate.
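The required reduction follows directly from the per-kilogram prices quoted above. The short sketch below simply divides today's price by each target; the function and label names are illustrative, and Musk's figure is treated as an upper bound because his stated goal is "well below" US$100/kg.

```python
# Launch-cost figures quoted in the text (US$ per kg).
CURRENT_COST = 6_500
TARGETS = {
    "Starcloud break-even": 500,
    "Google parity with Earth": 200,
    "Musk ambition (upper bound)": 100,
}


def reduction_factor(current: float, target: float) -> float:
    """Return how many times cheaper launches must become to hit the target."""
    return current / target


for label, target in TARGETS.items():
    factor = reduction_factor(CURRENT_COST, target)
    print(f"{label}: launches must be {factor:.1f}x cheaper than today")
```

On these inputs the Starcloud and Google thresholds imply reductions of 13x and 32.5x respectively, while Musk's ambition implies at least 65x.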

Another challenge is cooling. Space-based data centres cannot rely on terrestrial cooling methods and must instead dissipate heat through radiation. Heat is absorbed by a fluid and transferred to large radiator surfaces, which then emit it into deep space. These radiators must be substantial in size, making them a significant contributor to launch mass and cost, with meaningful design and manufacturing challenges.
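To give a feel for why radiators become so large, the sketch below applies the Stefan-Boltzmann law for an idealised flat panel radiating from both faces. The 300 K surface temperature, 0.9 emissivity and 1 MW heat load are assumptions chosen purely for illustration; they are not figures from Starcloud or any other operator, and the model ignores absorbed sunlight and other heat inputs.

```python
# Stefan-Boltzmann constant in W / (m^2 K^4).
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_w: float, temp_k: float,
                     emissivity: float = 0.9, sides: int = 2) -> float:
    """Idealised radiator area needed to reject heat_w watts to deep space.

    Ignores absorbed sunlight, heat reflected or emitted by Earth and the
    cosmic background, so a real radiator would need to be larger.
    """
    flux_per_face = emissivity * SIGMA * temp_k ** 4  # W per m^2 per face
    return heat_w / (flux_per_face * sides)


# Hypothetical example: 1 MW of waste heat, radiator surface at 300 K.
area = radiator_area_m2(1e6, 300.0)
print(f"{area:,.0f} m^2 of double-sided radiator per megawatt")
```

Because emitted power scales with the fourth power of temperature, running the radiator hotter shrinks the required area quickly, which is one of the design trade-offs behind the mass and cost concerns noted above.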

Additionally, space does not benefit from Earth’s atmosphere, which helps shield electronics from harmful radiation. As a result, space-based data centres must incorporate mitigation measures such as shielding or error-correcting software to ensure reliable operations. Maintenance, logistics and regulation also remain meaningful hurdles. Unlike terrestrial data centres, failed components cannot easily be replaced or upgraded once deployed in orbit. In parallel, operators must manage orbital debris risk, navigate unclear data sovereignty and security frameworks for space-based compute and address concerns from regulators and astronomers around orbital congestion and interference with scientific observation.

Early progress

“Anything you can do in a terrestrial data centre, I’m expecting to be able to be done in space.”

Philip Johnston, Starcloud CEO, December 2025 (CNBC)

Despite these challenges, initial progress is already being made. In November 2025, Starcloud deployed the first Nvidia H100 GPU to space via SpaceX. In December, the company announced that it had trained the first LLM in space and had successfully run inference on it. The purpose was to prove that its thermal management and radiation shielding techniques allow state-of-the-art hardware to operate in the harsh conditions of space. Going forward, Starcloud plans to deploy more advanced satellites, with its second launch, planned for October 2026, expected to be at least 10x more powerful.

In its 2024 white paper, Starcloud outlined a vision for an eventual 5 gigawatt orbital data centre, with massive solar arrays and radiators spanning approximately 4 kilometres in width and height. This equates to over 2,000 football fields. However, the leap from a single GPU to a 5 GW orbital installation is immense, and investors should treat such projections as aspirational rather than operational guidance.
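The football-field comparison can be sanity-checked with simple arithmetic. The sketch below assumes a standard 105 m by 68 m football pitch, which is our assumption rather than a figure from the white paper, and also derives the average power per square metre of structure implied by the 5 GW and 4 km figures.

```python
# Figures quoted in the text.
SIDE_M = 4_000          # approximate width and height of the structure
POWER_W = 5e9           # 5 gigawatts

# Assumption: a standard 105 m x 68 m football pitch.
PITCH_AREA_M2 = 105 * 68

structure_area_m2 = SIDE_M * SIDE_M
pitches = structure_area_m2 / PITCH_AREA_M2
power_density_w_m2 = POWER_W / structure_area_m2

print(f"Structure area: {structure_area_m2 / 1e6:.0f} km^2")
print(f"Equivalent football pitches: {pitches:,.0f}")
print(f"Average power per m^2 of structure: {power_density_w_m2:.1f} W")
```

Under that pitch size, the 16 square kilometre structure corresponds to a little over 2,200 pitches, consistent with the "over 2,000" description.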

Figure 6: Illustrative concept of Starcloud’s proposed 5GW orbital data centre

Source: Starcloud

To the moon

“The Moon is a gift from the universe.”

Jeff Bezos, October 2025 (Italian Tech Week)

While initial orbital data centres rely on Earth-launched satellites, the ultimate vision extends to the Moon. Jeff Bezos has long advocated for moving heavy industry off Earth to preserve the planet, highlighting the Moon’s low gravity, proximity and resources for fuel and infrastructure. Additionally, President Trump’s December 2025 Executive Order calls for Americans to return to the Moon by 2028 and establish initial elements of a permanent lunar outpost by 2030. Musk’s longer-term ambitions align with this, envisioning factories on the Moon that use local resources to produce data centre satellites in vast quantities, potentially exceeding 100 terawatts of compute capacity. Due to the Moon’s low gravity, these satellites would be launched directly into orbit via mass drivers (electromagnetic railguns), dramatically lowering costs, enabling massive scale and unlocking a future of vast orbital AI compute.

Listed companies in the space

Below we highlight some publicly listed companies that have direct or indirect exposure to space data centres.

Figure 7: Public companies with exposure to space data centres

Source: SimplyWallSt.

Note: Market cap. as of 9 Jan 2026

Conclusion

The concept of data centres in space is now moving from speculation to early experimentation as AI compute demand collides with hard, Earth-based constraints. While the challenges ahead remain substantial, with launch costs still representing a major barrier, early demonstrations and growing engagement from technology leaders and governments show this is no longer a fringe idea. However, this trajectory is not inevitable. Breakthroughs elsewhere could eliminate the need for space-based compute, whether through fusion delivering radically cheaper terrestrial energy or major gains in algorithmic or hardware efficiency. Yet, under today’s technological landscape, space-based data centres represent the most direct path being actively pursued to unlock the scale of energy required for future AI infrastructure.

At AlphaTarget, we invest our capital in some of the most promising disruptive businesses at the forefront of secular trends; and utilise stage analysis and other technical tools to continuously monitor our holdings and manage our investment portfolio. AlphaTarget produces cutting edge research and our subscribers gain exclusive access to information such as the holdings in our investment portfolio, our in-depth fundamental and technical analysis of each company, our portfolio management moves and details of our proprietary systematic trend following hedging strategy to reduce portfolio drawdowns. To learn more about our research service, please visit https://alphatarget.com/subscriptions/.

Introduction

Although there are still concerns that the AI market may be in a bubble, industry investment continues at pace with little sign of slowing. Hyperscalers continue to guide towards record capital expenditure, consultancies report surging AI bookings, and enterprise software vendors are racing to embed agentic capabilities into their platforms. As with other major technology shifts, it will take time before the returns from this investment become fully visible. Even so, for investors it remains important to monitor how AI is being adopted and used in practice, as this helps assess whether current developments are on track to realise its long-term promise.

In this note, we focus on enterprise AI and examine how organisations are moving beyond chatbots and narrow point solutions to embed AI deeper into business workflows. In the first section, we look at AI adoption and find that it is widespread but generally remains shallow, with most organisations in the early phases of deployments. We then explore the key challenges and limitations shaping adoption, where reliability currently remains an important constraint. Finally, we assess the rise of AI platforms and the emerging competition to become the organising layer for enterprise AI.

Overall, the evidence points to early signs of real value being realised, even if deployments remain constrained at this stage. Given the early stage of the cycle and the pace of ongoing advances, we remain optimistic that enterprise AI is on a healthy trajectory with substantially more value still to be unlocked.

Enterprise adoption

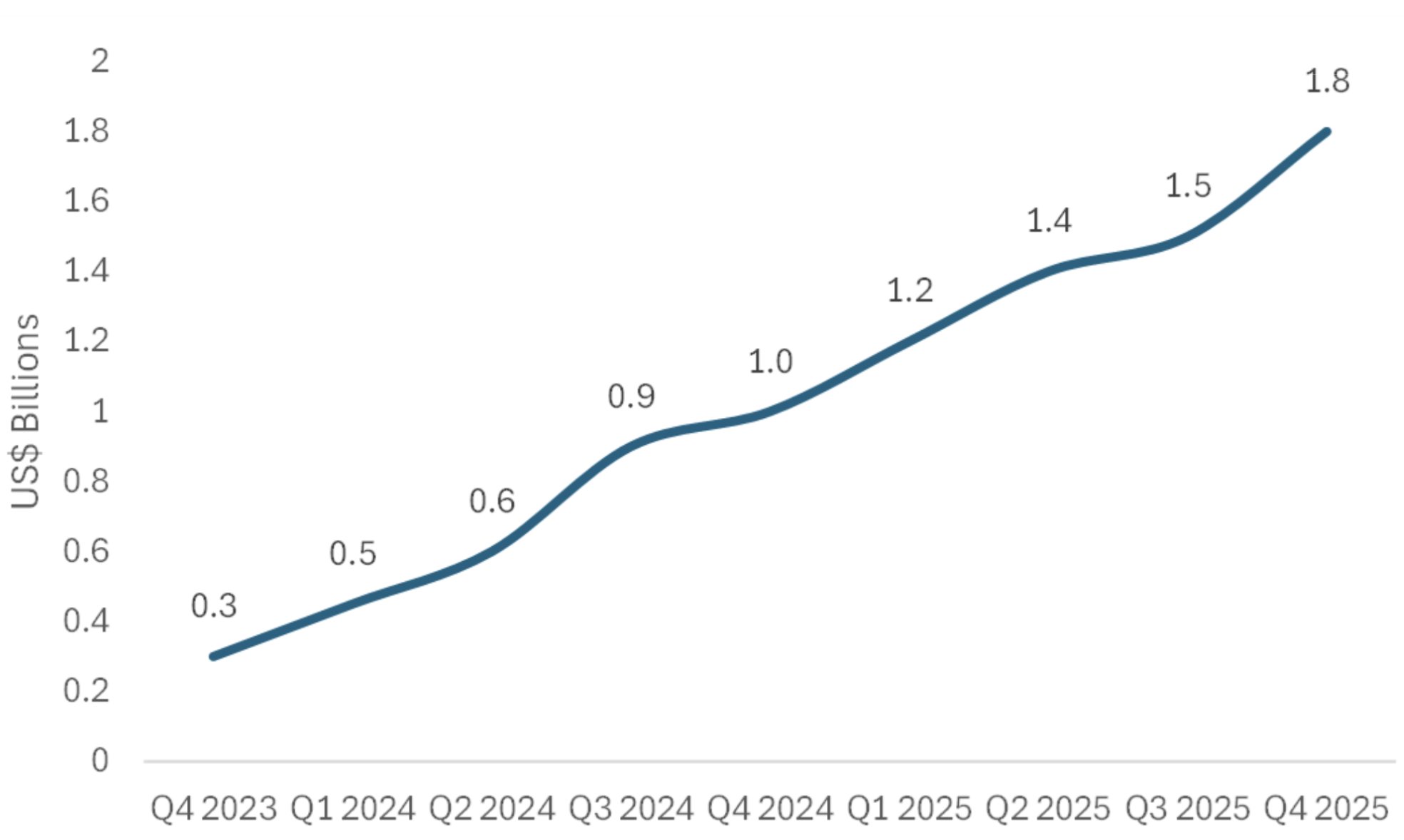

In general, enterprises appear eager to embrace AI. A McKinsey study which surveyed organisations in the summer of 2025 found that 88% use AI in at least one business function, up from 78% a year earlier. Additionally, we have observed strong demand for AI services at consultancies such as Accenture and Capgemini. Accenture recorded US$1.8 billion in AI bookings in its fiscal Q4 2025 (ended 31 August) and US$5.9 billion for FY25 as a whole, nearly doubling year-over-year and representing over 7% of total company bookings. Similarly, Capgemini’s AI bookings in Q3 2025 (ended 30 September) were 8% of total bookings. This shows growing willingness among enterprises to commit budget to AI initiatives.

Figure 1: Accenture’s Generative AI Quarterly Bookings (Fiscal Year end August)

Source: Accenture

However, breadth of adoption does not imply depth. Most enterprise AI deployments remain incremental rather than transformative. These deployments are typically augmenting existing workflows with copilots and chatbots rather than fundamentally redesigning processes or enabling entirely new capabilities.

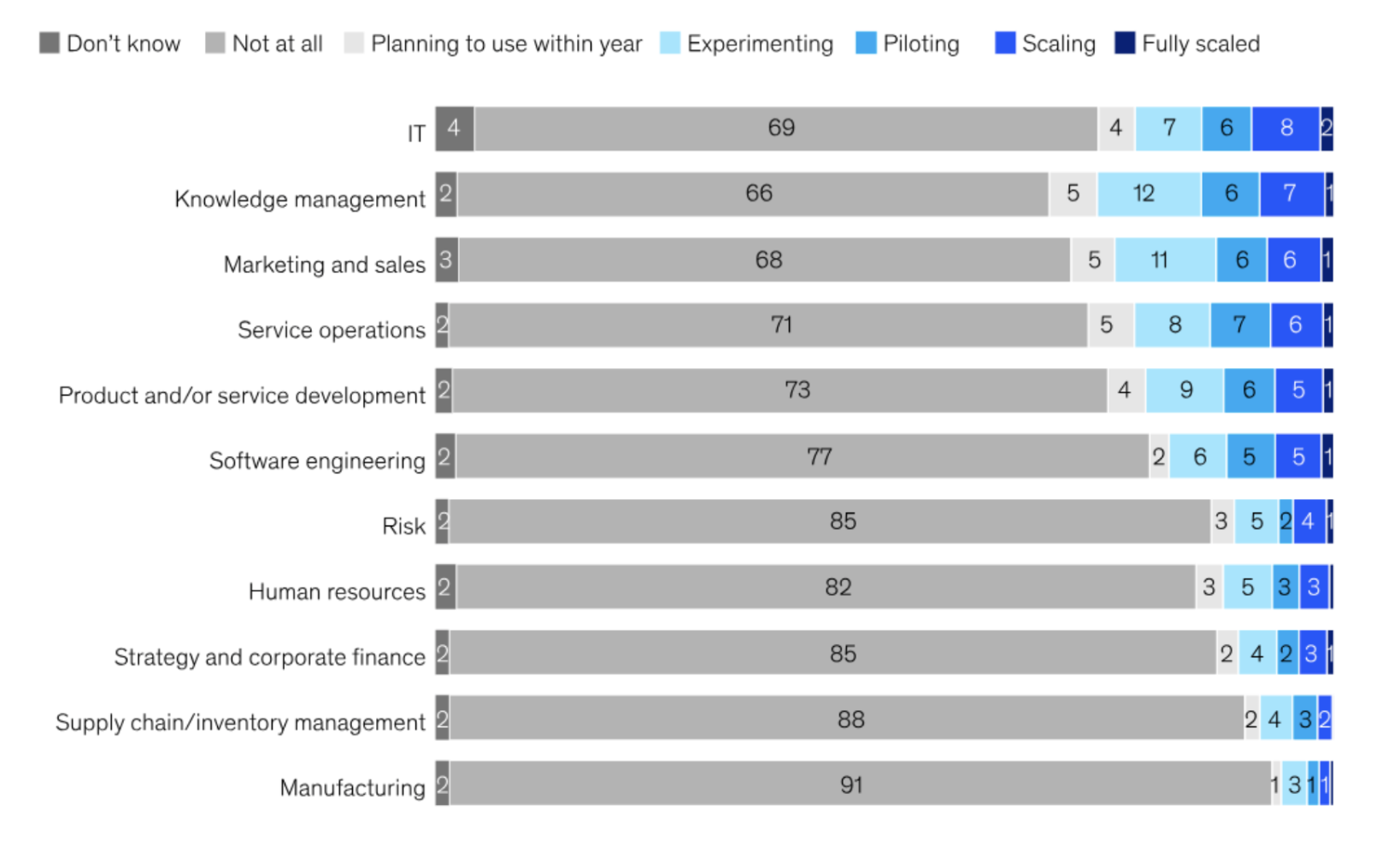

This shallow pattern is evident in the data. According to the McKinsey study, 62% of organisations are still in the AI experimentation and piloting phase, whereas just 7% have AI fully deployed and integrated across their organisation.

In terms of deeper AI deployments using agents (systems that autonomously execute multi-step tasks using a model and tools within defined constraints), only 23% of organisations report that they are scaling these somewhere within their organisation, though usually limited to 1-2 business functions. An additional 39% of organisations are still experimenting. The relative scarcity of agentic deployments underscores the gap between current practice and AI’s fuller potential: agents represent a shift from AI as a tool that assists human workers to AI as a participant that executes tasks with meaningful autonomy.

Figure 2: Phase of AI agent use by business function (% of respondents)

Source: McKinsey

Challenges, constraints and value

The fact that adoption remains shallow reflects not only the early stage of the cycle, but also a range of practical challenges and constraints.

Organisational readiness: Many departments lack leadership ownership for AI initiatives, as these often involve upfront costs and the redesign of workflows, which can represent a short-term burden. Resistance to change is compounded by a shortage of talent able both to identify which workflows can be optimised and to implement the changes.

Technical foundations: Many companies lack the necessary data infrastructure and carry significant technical debt that limits their ability to support AI initiatives. Clean, accessible, well-governed data is a prerequisite for most meaningful AI applications, yet remains elusive for many organisations.

Risk and governance: Finally, there is ongoing hesitation driven by concerns around data privacy and cybersecurity risks.

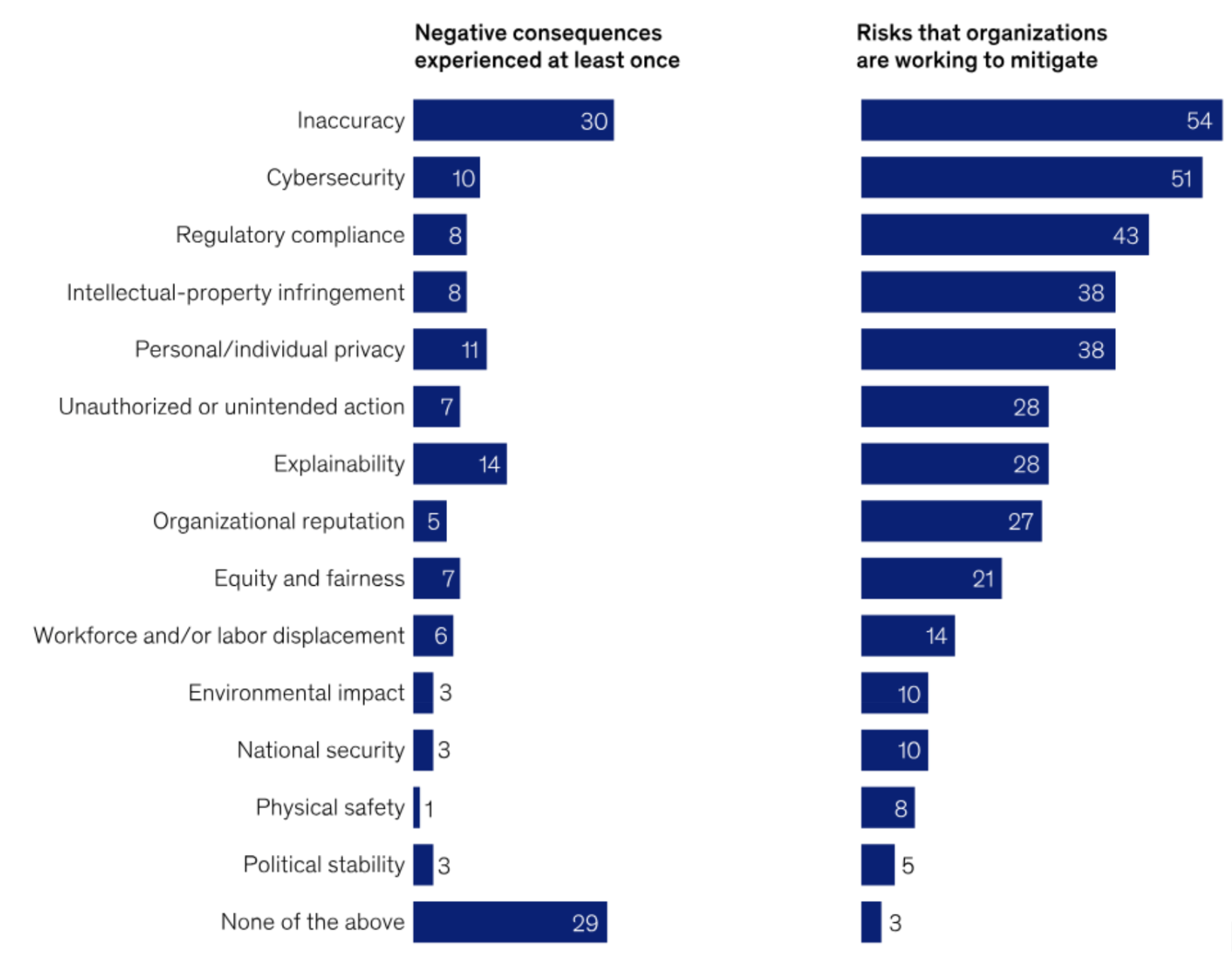

Once AI initiatives are implemented, organisations often face a range of issues that they need to mitigate, with inaccuracies and explainability emerging as the two factors associated with the most negative consequences. Inaccuracy risk is particularly acute for customer-facing or regulated applications, where errors carry reputational or compliance costs. Explainability, specifically the ability to understand and justify why an AI system produced a given output, matters both for internal trust and external accountability.

Figure 3: AI-related negative consequence and risk mitigation in the past year (% of respondents)

Source: McKinsey

Reliability and Architectural Considerations

The study “Measuring Agents in Production” (December 2025), by researchers from UC Berkeley, Stanford University and IBM Research, found that 68% of production AI agents perform at most ten steps autonomously before human intervention, a limit intended to manage reliability, computational time and costs. Additionally, 74% of teams rely on human-in-the-loop oversight or feedback to judge the agent’s answers. This is because it is difficult to automatically evaluate whether the AI is performing correctly on specialised business tasks, so companies still lean on users to review results.

Due to reliability and cost considerations, enterprises are increasingly deploying AI agents within deterministic workflow scaffolding rather than granting full autonomy. Most production systems fix the sequence of steps an agent must follow and limit model discretion to bounded stages. A common example is an insurance agent that always moves through coverage lookup, medical-necessity review and risk checks in a fixed order, even though the model may still improvise within each subtask. This pattern is reflected in the Measuring Agents in Production study, which finds that 80% of real deployments use structured, stepwise flows. This approach improves predictability, auditability and cost efficiency by reducing variance, unnecessary model calls and failure loops. As a result, enterprises are typically deploying LLMs inside deterministic shells, particularly in standardised and regulated processes, rather than relying on free-form autonomous agents.
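The "deterministic shell" pattern described above can be sketched in a few lines. This is a minimal illustration rather than any vendor's implementation: the stage names come from the insurance example in the text, while `run_claim_workflow`, `fake_model` and the step cap parameter are hypothetical names introduced here, and the model is replaced by a stub so the code runs without any external service.

```python
from typing import Callable

# Hypothetical stand-in for an LLM call: (stage name, context) -> text output.
ModelFn = Callable[[str, dict], str]

# The order of stages is fixed in code; the model cannot reorder or skip them.
STAGES = ("coverage_lookup", "medical_necessity_review", "risk_checks")


def run_claim_workflow(claim: dict, model: ModelFn,
                       max_model_calls: int = 10) -> dict:
    """Run each stage in order, letting the model act only inside a stage."""
    results: dict = {}
    calls = 0
    for stage in STAGES:
        if calls >= max_model_calls:
            # Step budget exhausted: hand over to a person instead of looping.
            results["status"] = "escalated_to_human"
            return results
        # Model discretion is bounded to producing this stage's output.
        results[stage] = model(stage, {"claim": claim, "so_far": dict(results)})
        calls += 1
    # Human-in-the-loop: the outcome is queued for review, not auto-applied.
    results["status"] = "pending_human_review"
    return results


def fake_model(stage: str, context: dict) -> str:
    """Deterministic stub so the sketch runs without a real model."""
    return f"{stage}: ok for claim {context['claim']['id']}"


outcome = run_claim_workflow({"id": "C-1"}, fake_model)
print(outcome)
```

The design choice is that control flow lives in ordinary code, so every run is auditable stage by stage, while the step cap and the final review status reflect the bounded-autonomy and human-oversight practices reported in the study.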

Despite current challenges and limitations, available evidence suggests AI agents are already delivering meaningful productivity benefits. In-depth interviews in the Measuring Agents in Production study reveal that organisations tolerate minutes-long execution times because agents still outperform human baselines on the tasks they replace. Among practitioners with deployed agents who evaluated alternatives, 82.6% preferred the agentic solutions for production deployment, indicating superior performance relative to non-agentic approaches. Consistent with this, McKinsey found that 39% of organisations using AI report some impact on EBIT at the enterprise level, suggesting that early productivity gains are beginning to translate into financial outcomes.

AI platform wars

“The CEOs are kind of getting the picture. Many of them have killed these proof-of-concept scenarios. One had 900 proof-of-concepts going on in the company and said it was uncontrollable, and they killed them all. And they went with ServiceNow.”

Bill McDermott, ServiceNow CEO, Q3 2025

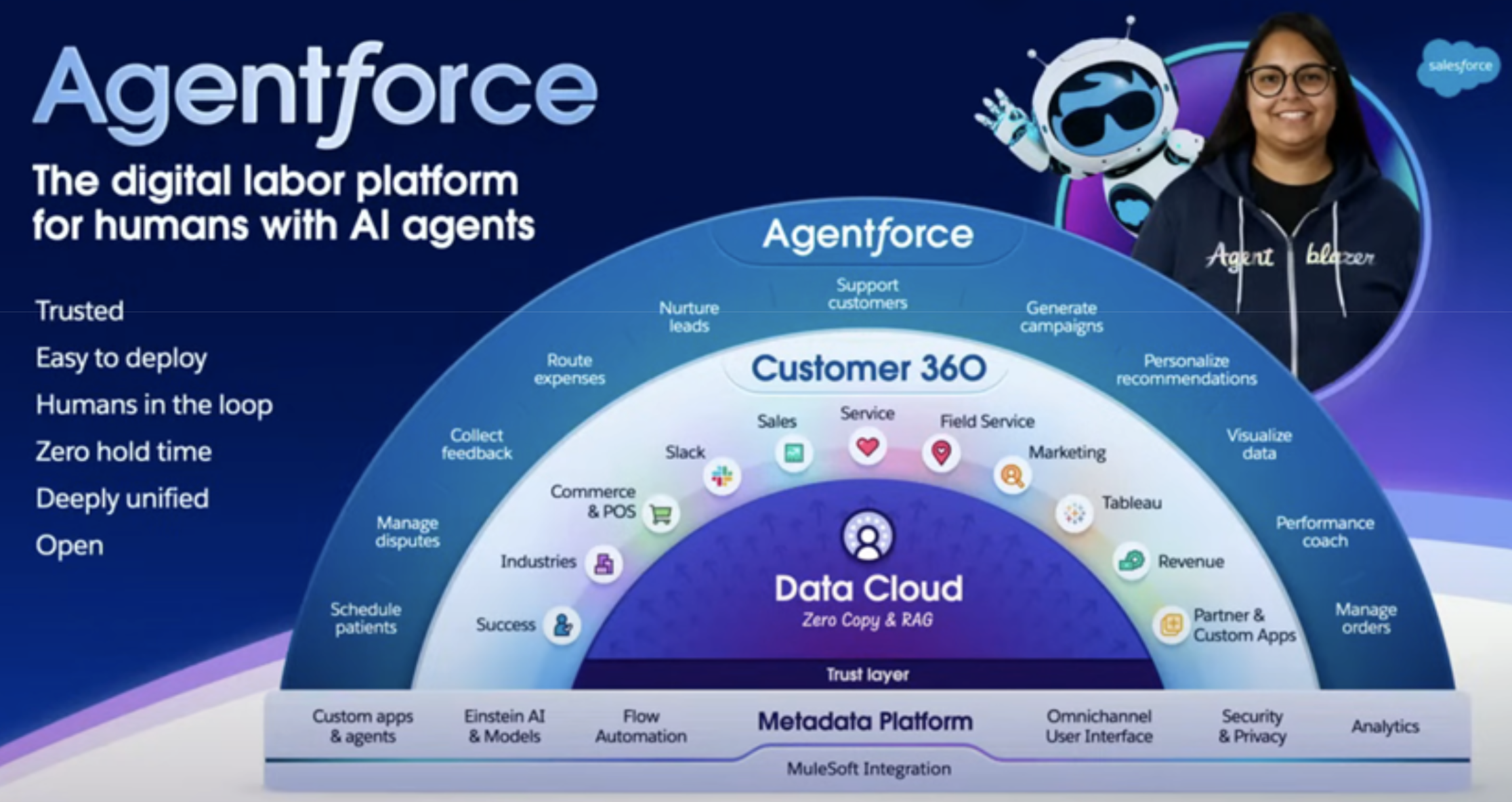

Due to the challenges of building and implementing in-house agents, many organisations have now turned to their existing software vendors, such as ServiceNow and Salesforce, which offer out-of-the-box agents and AI platforms. These solutions require less setup and less in-house expertise, and benefit from agents being tightly integrated with the workflows of the parent system.

ServiceNow

ServiceNow, which now calls itself the “AI Platform for business transformation,” offers a wide range of AI solutions to help optimise workflows across areas such as IT, CRM and HR. Not only does it let customers build their own agents, but it also has over 100 pre-packaged agentic workflows. Additionally, ServiceNow offers the AI Control Tower product, which provides visibility, governance, security and centralised control. Furthermore, its AI Agent Fabric product, a communication and integration layer, allows connections to third party AI agents and tools to enhance agentic workflow orchestration.

In its Q3 2025 (ended September) earnings call, ServiceNow management stated that its AI products are on track to exceed US$500 million for the full year. For FY2026 the company is targeting US$1 billion in AI product revenue, though it is aiming to surpass this target.

Salesforce

Salesforce has likewise gone all-in on becoming a leading AI platform company. This transition underpinned its recent US$8 billion acquisition of the cloud data management company Informatica, as it sought to strengthen its data infrastructure to support agentic workflows. Salesforce also rebranded many of its core offerings with the Agentforce label: Sales Cloud is now Agentforce Sales, Service Cloud is now Agentforce Service, and the platform layer was renamed Agentforce 365 Platform.

According to reporting from Business Insider, Salesforce CEO Marc Benioff said customers no longer want to talk about “the cloud” and instead frame their needs primarily around AI agents, a shift Salesforce validated in pre-Dreamforce focus groups. The rebrand appears to be so significant that Benioff even told Business Insider he “would not be shocked” if Salesforce ultimately renamed the entire company to Agentforce.

During its annual Dreamforce conference in October 2025, Salesforce provided several examples of global enterprises already deploying its new AI capabilities in production. Case studies included Williams-Sonoma, Pandora, PepsiCo, FedEx and Dell.

The Williams-Sonoma case study demonstrated how Agentforce can power fully branded consumer-facing agents: the retailer launched “Olive,” an AI sous-chef built in just 30 days. Olive provides personalised recipe guidance, tutorials and product-aware recommendations based on items a customer already owns.

In the PepsiCo case study, Salesforce showed how Agentforce enhanced sales, marketing and field operations by embedding agents directly into customer and employee workflows. Prospective retailers now receive personalised product recommendations and automated follow-up emails, while sellers use Slack-based insights to prepare for meetings and identify risks. Field technicians benefit from pre-work briefs, real-time troubleshooting and automatic post-work summaries, with Agentforce also highlighting bespoke upsell opportunities to drive incremental revenue.

The FedEx case study highlighted efficiency improvements when its customers ask sales reps complex international-shipping questions. Previously, when reps posed these queries to their chatbot, they would often get back a 200-page tariff PDF that they had to wade through to find an answer. Now, however, the agent returns precise and complete shipping recommendations while governance rules mask sensitive data.

The case study also showed that when employees received a new laptop that was not properly set up (e.g. missing apps), they could simply describe this issue to Agentforce in Slack and have the device automatically configured without the need to raise an IT ticket.

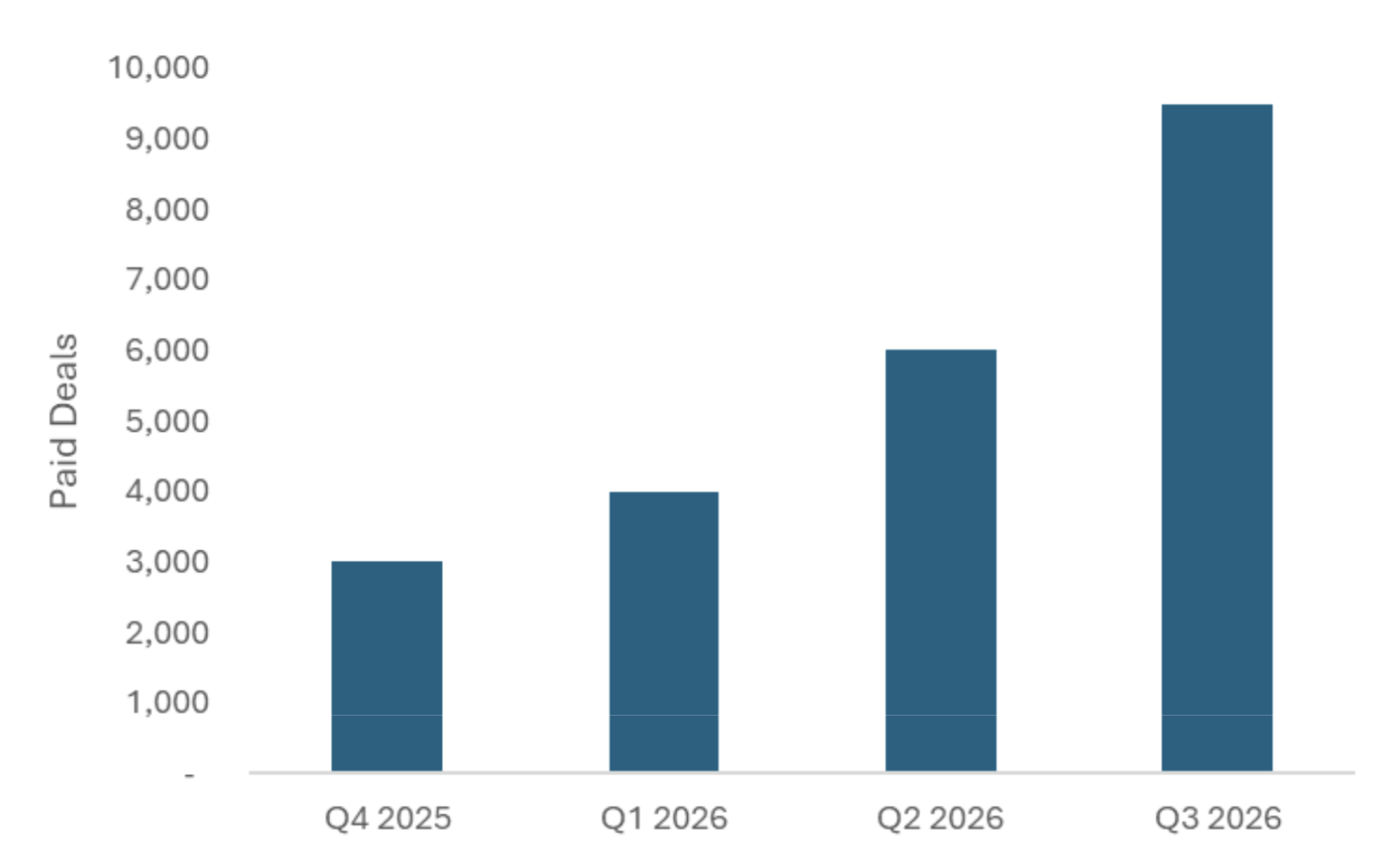

The early success of Salesforce’s AI products is reflected in its numbers. Agentforce ARR (annual recurring revenue) surpassed US$0.5 billion in its fiscal Q3 2026 (ended 31 October 2025), growing 330% year-over-year with 9,500 paid deals. Agentforce accounts in production also grew 70% quarter-over-quarter, and 50% of its Agentforce and Data 360 bookings came from existing customer expansion, indicating early product-market fit as customers continue to build on and expand these deployments rather than abandoning them.
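To put the 330% growth rate in context, the prior-year base can be backed out from the two figures quoted above. The sketch below is illustrative arithmetic only: because ARR "surpassed" US$0.5 billion, the result is a floor rather than a reported number, and the function name is our own.

```python
def implied_prior_value(current: float, growth_pct: float) -> float:
    """Back out the prior-period value from a current value and % growth.

    A growth rate of 330% means the current value is 4.3x the prior one.
    """
    return current / (1 + growth_pct / 100)


CURRENT_ARR_BN = 0.5   # US$ billions, lower bound quoted in the text
GROWTH_PCT = 330       # year-over-year growth quoted in the text

prior_arr_bn = implied_prior_value(CURRENT_ARR_BN, GROWTH_PCT)
print(f"Implied prior-year ARR: at least US${prior_arr_bn * 1000:.0f} million")
```

On these inputs the implied base a year earlier is a little over US$115 million, which underlines how small the starting point was.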

Figure 4: Salesforce’s paid deals for Agentforce (Fiscal Year end January)

Source: Salesforce

AI platform competition

Looking ahead over the medium term, we expect demand for AI platforms embedded within existing enterprise software to remain strong, reflecting customer appetite for agentic capabilities within established workflows. Because organisations typically rely on many different software vendors, though, they are likely to end up operating multiple AI platforms across different software ecosystems. For example, FedEx utilises both Salesforce’s and ServiceNow’s AI platforms in production.

However, the true unlock lies in enabling agents and workflows to operate seamlessly across the entire organisation rather than remaining confined to the parent ecosystems. This capability is increasingly a stated goal of leading platforms, and we are already seeing moves in this direction, for example through Salesforce’s integration with Microsoft Teams. Achieving this cross-enterprise orchestration would meaningfully strengthen platform positioning, stickiness and long-term value capture.

This prize is therefore driving intensifying competition. It is not only ServiceNow and Salesforce that are pursuing this role, but also a range of other players seeking to become the organising layer for enterprise AI, ranging from Google with Gemini Enterprise to platforms such as Dataiku and UiPath, each approaching the opportunity from a different angle. Microsoft, with its Copilot suite deeply integrated across Office 365 and Azure, represents perhaps the most formidable competitor given its existing enterprise footprint.

However, delivering true cross-enterprise orchestration remains difficult in practice. Each organisation has a unique application landscape, data architecture and workflow structure, and it is not yet clear whether any single platform will be able to integrate deeply enough across the full enterprise to meet most use cases. If platforms struggle to operate across the enterprise, companies may increasingly need to build custom in-house agents and agentic systems to bridge gaps and coordinate end-to-end workflows.

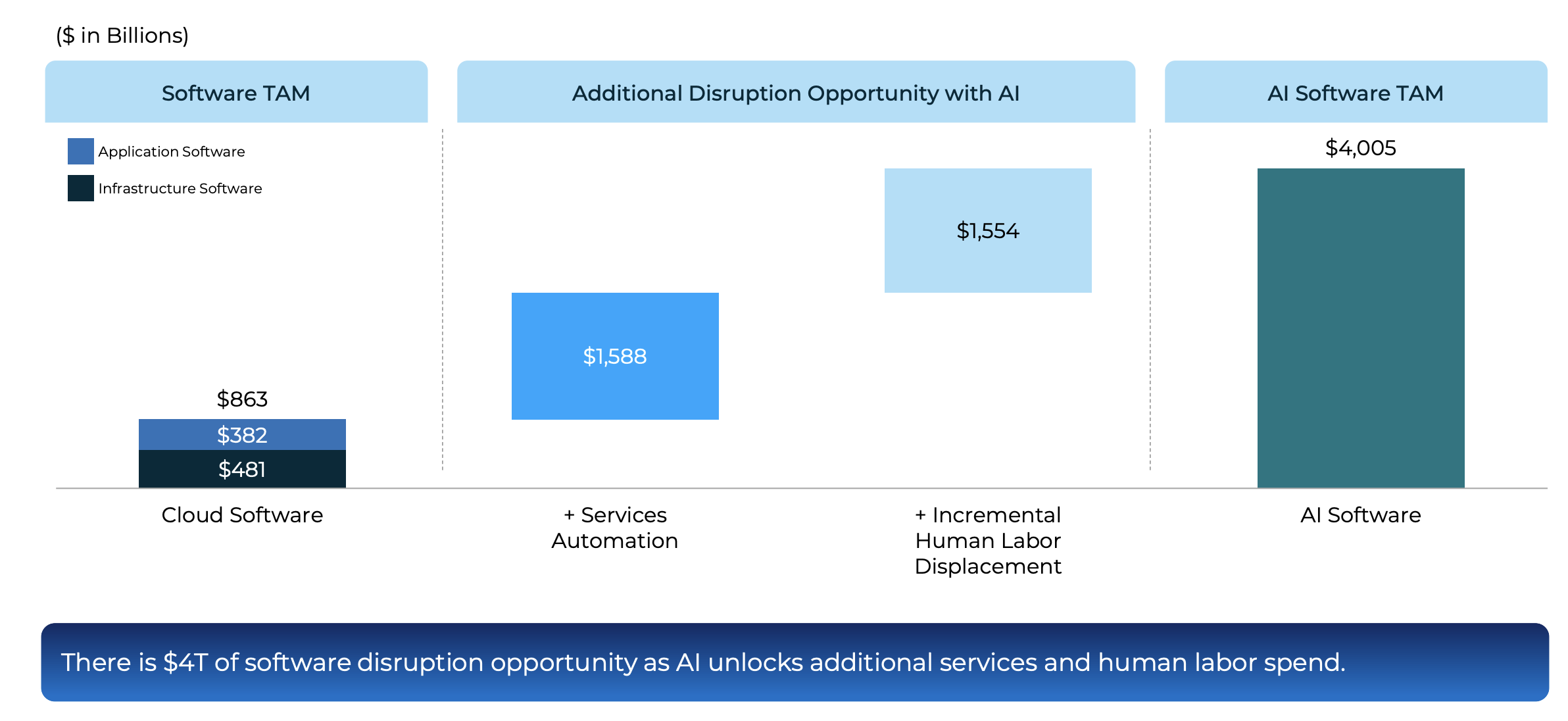

Sizing the opportunity

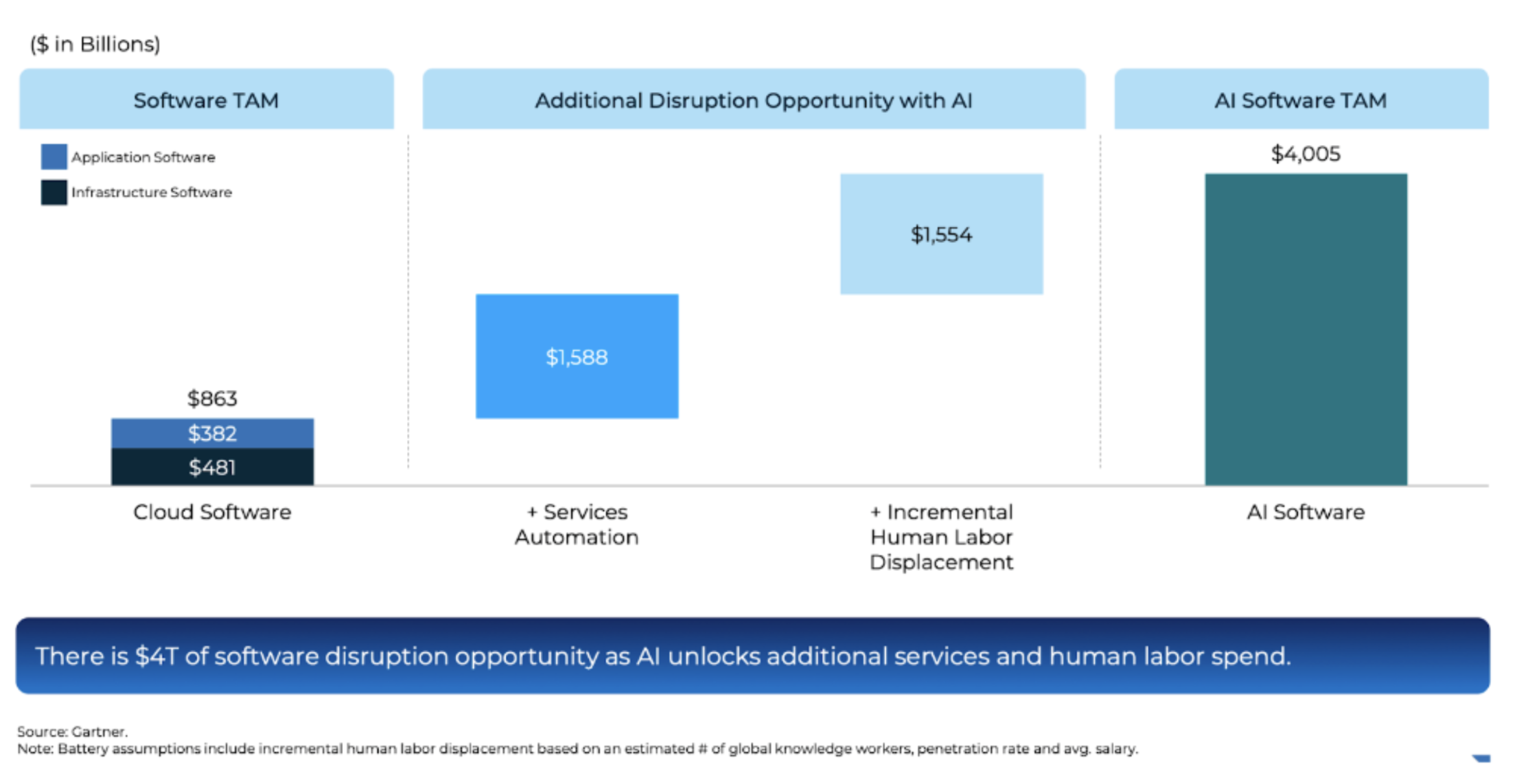

While precise market sizing for enterprise AI remains difficult given the early stage of adoption, the scale of the opportunity is clearly substantial. Battery Ventures estimates that AI could underpin a roughly US$4 trillion software opportunity by expanding traditional application and infrastructure markets while also unlocking incremental services automation and partial displacement of human labour.

Figure 5: The massive AI market opportunity

Source: Battery Ventures, State of the OpenCloud

Conclusion

The evidence points to early but tangible signs of value realisation in enterprise AI, even as adoption remains uneven and constrained by practical challenges. Many organisations remain in the experimentation and piloting phases, with limitations around reliability, integration, talent availability and human oversight persisting. There are, however, clear indications of productivity gains and, in some cases, early impacts on EBIT. That said, it remains too early to determine whether these gains will ultimately justify the substantial capital expenditures being made across the sector. Nevertheless, the progress to date is encouraging, particularly when viewed against historical precedent, as it took roughly 15 years before PCs drove widespread productivity improvements across the economy.

Looking ahead, we expect continued progress as multiple reinforcing factors improve. Advances in hardware and models should increase capability while lowering costs, while accumulated implementation know-how and more mature architectures should reduce execution risk. Together, these dynamics are likely to expand the set of economically viable use cases and support further deployment of both custom-built agents and AI platforms embedded within enterprise workflows.

The eventual structure of the enterprise AI landscape remains uncertain, however, especially amid the intensifying “AI platform wars” as vendors vie for dominance in cross-enterprise orchestration. Over the long run, it is unclear whether organisations will persist with multiple AI platforms siloed across different software ecosystems, converge on a single governing platform, or lean more heavily on custom in-house agentic systems to achieve seamless integration. That said, we expect forward-thinking enterprises to increasingly pursue agentic workflows that span the entire organisation, unlocking deeper efficiencies and strategic advantages.

Overall, while still early in the cycle, the foundations for realising substantial value from enterprise AI are beginning to take shape.

“We are also on the cusp of something really tremendous with Optimus, which I think is likely to be, has the potential to be, the biggest product of all time.”

Elon Musk, Tesla CEO, Q3 2025

Introduction

Over recent years, humanoid robots have made tremendous progress driven by advances in hardware and AI. Dozens of companies have entered the space and several are now moving from proof of concept toward mass production. The goal is to build machines that can take on physical labour, performing the dull, dangerous and dirty tasks that humans would rather avoid, both in factories and in households. If this vision can be achieved, many founders and analysts alike expect that over the long run there will be billions of humanoids on the planet, becoming as ubiquitous as cars and smartphones.

Yet the path ahead remains full of challenges. Most notably, general robotics has not yet been solved. Although today’s humanoids can perform demonstrations that look impressive, from dancing to doing backflips, they still struggle with tasks humans find trivial such as folding clothes. They also lack the general adaptability needed to operate reliably in unfamiliar environments. Ultimately, the ability to carry out practical real-world tasks while adapting to changing conditions and requirements will determine how widespread and impactful these robots can become.

In this note we first discuss the benefits of general-purpose humanoids and compare them with specialised robots. We then profile three prominent players in the space: Tesla, 1X and Figure AI. Next we examine the challenges humanoids must overcome to become truly general-purpose and reach scale. Finally, we outline the size of the opportunity and discuss how the emerging humanoid market could evolve.

The case for humanoid robots

There has long been debate over why general-purpose humanoids are needed when specialised machines can often perform individual tasks better. We agree that in many cases specialised robots will be superior and they are not going away. However, many of the tasks that need to be performed are varied, intermittent and carried out in conditions that change from moment to moment. Humans are still far better suited to this kind of dynamic work and that is exactly the type of role a humanoid is designed to take on.

A useful comparison is the smartphone, which combines voice calls, photography, gaming, payments and email into one device rather than relying on separate specialised equipment. While dedicated equipment might outperform it in specific areas, most people prefer the convenience of a single, versatile device over carrying multiple specialised ones.

Versatility can also be a direct advantage for certain tasks. For example, a robot vacuum cannot move a chair to clean under a table, whereas a humanoid can. In such scenarios the general-purpose solution is not only more convenient but also more capable.

Compared to specialised robots, humanoids can also be produced in much higher volumes given the potential demand, benefiting from economies of scale in manufacturing and supply chains. Greater scale also spreads R&D costs across more units, driving down overall unit costs.

The human form factor is also an advantage. Most physical environments are designed around human proportions, so humanoids can naturally operate equipment and move through spaces built for people. In addition, the human form factor is far easier to train. Humanoids can learn directly from human demonstrations, including motion capture data, which provides high-quality examples of how tasks should be performed. Other form factors lack a human equivalent to learn from, making training significantly more difficult.

Tesla – Optimus

“Optimus, I think, probably achieves five times the productivity of a person per year because it can operate twenty-four seven.”

Elon Musk, Tesla CEO, Q3 2025

Optimus is Tesla’s humanoid robot and today roams parts of the company’s headquarters unsupervised. Musk has said it can guide visitors through the building and already performs some tasks inside Tesla’s factories. Optimus 2.5 was also publicly showcased at the Tron premiere in October 2025 where it demonstrated Kung Fu.

Figure 1: Optimus 2.5 doing Kung Fu at the Tron premiere in October 2025

Source: YouTube – DPCcars

It is difficult to know exactly how Optimus compares with its peers, as the field is currently in a fog of war. After all, most humanoids are not yet deployed widely in the real world and companies in the sector remain highly secretive about their latest capabilities. Many demonstrations rely on varying levels of teleoperation, which makes it hard to assess what is truly autonomous. Musk has commented that at least one of Optimus’s Kung Fu demonstrations was AI-driven rather than teleoperated, although the limited transparency in the sector still makes broader comparisons challenging.

Despite this limited transparency, Tesla remains widely regarded as a frontrunner in the humanoid race. Early investors and industry veterans with inside access to multiple projects consistently rank Tesla highly on technical grounds, even though their visibility is still partial. Additionally, Tesla’s extensive real-world AI experience, strong electrical and mechanical engineering capabilities and considerable manufacturing scale give the company a credible path to solving the field’s twin challenges of intelligence and manufacturing. Tesla is also well capitalised and led by a founder with an exceptional track record of tackling hard problems and creating value.

Tesla is now working on Optimus 3, which Musk describes as a giant improvement over Optimus 2.5. He has said Tesla will probably unveil a production prototype in Q1 2026 and that it will seem like a person in a robot suit, with agility roughly equal to that of an agile human. Volume production is now targeted to start at the end of 2026, though the ramp-up will take time.

“Optimus at scale is the infinite money glitch.”

Elon Musk, Tesla CEO, Q3 2025

The medium and long-term targets are highly ambitious. Optimus 3 is aimed at reaching 1 million units per year before 2030 at a target cost of less than US$20,000. Optimus 4 is planned to reach 10 million units annually with production starting in 2027. Optimus 5 is planned to reach 50-100 million units annually with production starting in 2028. Musk expects that humanoids will eventually account for 80% of Tesla’s value in the long run.

1X – Neo

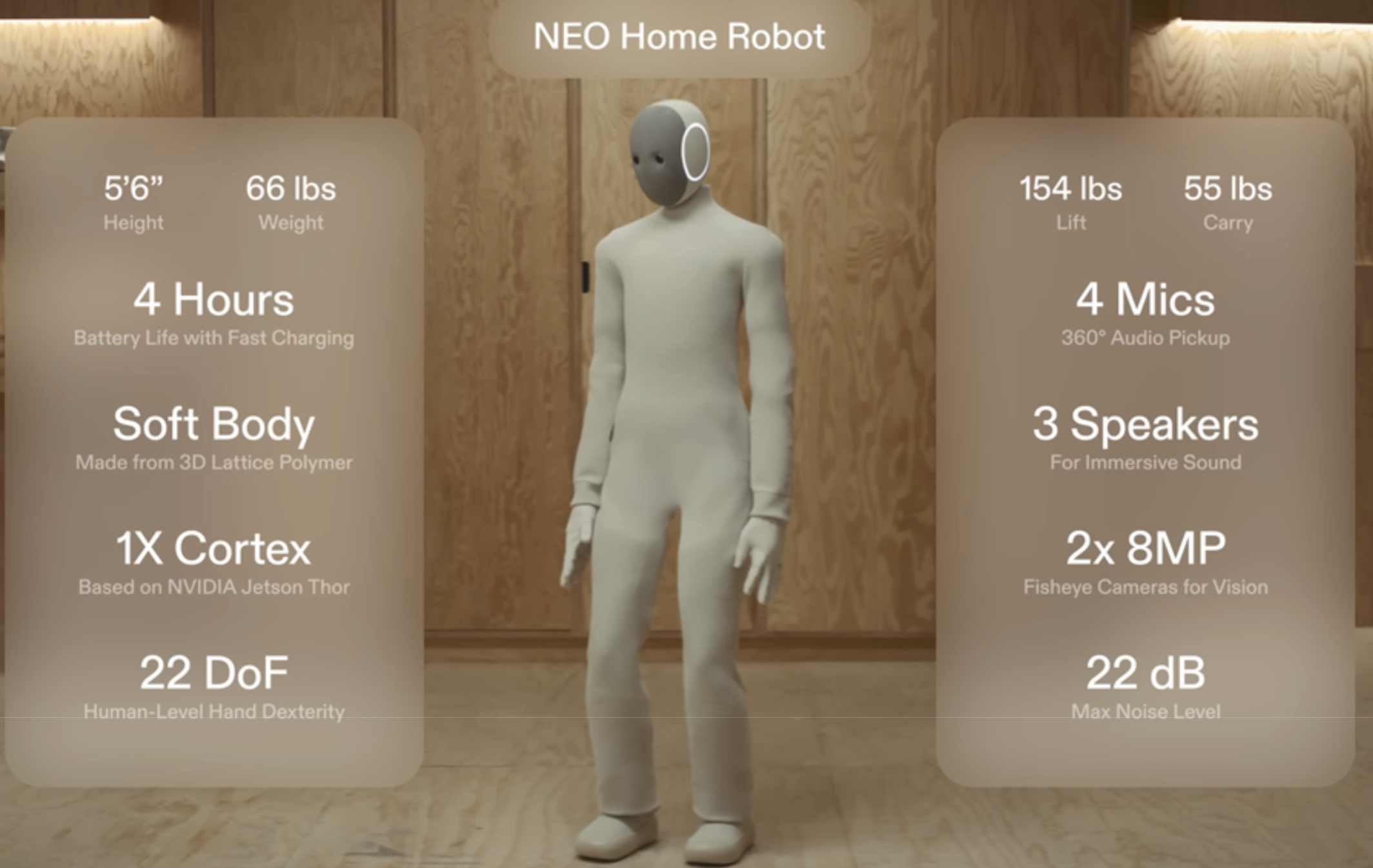

A dark horse in this space is 1X, which was founded in Norway in 2014 and later relocated its headquarters to Palo Alto. In 2023 it raised US$23.5 million in a Series A2 round led by OpenAI, followed by a US$100 million Series B in 2024. It is now reportedly seeking a further US$1 billion, according to The Information.

Figure 2: Neo Home Robot by 1X

Source: 1X

1X gained considerable attention in October 2025 when it announced early-access pre-orders for its home robot, Neo. This is a lightweight humanoid designed to help with chores and act as a companion, allowing users to ask questions such as where they last left their keys. US deliveries are set for 2026, with early access priced at US$20,000 or US$499 per month.
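The two early-access prices can be compared with a simple break-even calculation. The sketch below uses only the US$20,000 and US$499 figures quoted above; it deliberately ignores financing costs, resale value and any contract terms, none of which are covered in the text, and the variable names are illustrative.

```python
import math

PURCHASE_PRICE = 20_000   # US$, one-off early-access price
MONTHLY_FEE = 499         # US$ per month, subscription price

# Number of monthly payments after which cumulative subscription spend
# exceeds the one-off purchase price.
break_even_months = math.ceil(PURCHASE_PRICE / MONTHLY_FEE)

print(f"Subscription overtakes purchase after {break_even_months} months "
      f"(about {break_even_months / 12:.1f} years)")
print(f"Cumulative spend at that point: US${break_even_months * MONTHLY_FEE:,}")
```

On these figures the subscription remains the cheaper route for roughly the first three years, which may suit early adopters who expect the hardware to be superseded quickly.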

Unlike most competitors, which target more industrial settings, 1X’s focus on households presents greater safety challenges and far more environmental complexity. The company’s rationale for this, however, is that it believes consumer products scale much faster and that the variety of environments will deliver richer data, helping Neo improve more quickly.

Neo’s design centres on safety and lightness. Its finger strength deliberately matches that of a human, because excessively strong fingers would lose sensitivity. Instead of the gear-based mechanisms found in most robots, 1X has developed a proprietary actuation system called Tendon Drive, which uses high-torque motors pulling synthetic tendons inspired by biological muscles. This patented system enables smooth, quiet and safe movements while keeping the robot lightweight. It also makes the robot simpler and cheaper to produce, which already allows 1X to manufacture Neo at an affordable cost.

Coinciding with the Neo launch announcement, the Wall Street Journal released a video interview with CEO Bernt Øyvind Børnich, showing Neo performing basic home tasks. It took around one minute to fetch a glass of water and roughly five minutes to load three items into a dishwasher. However, all demonstrations were revealed to have been conducted using teleoperation, with a human in the loop helping the robot operate and make decisions. The video triggered an online backlash, as many felt the announcement was overhyped given that Neo was not yet capable of acting autonomously.

“So when you get your Neo in 2026, it will do most of the things in your home autonomously. The quality of that work will vary and will improve quite fast as we get data.”

Bernt Øyvind Børnich, 1X CEO, October 2025 (WSJ)

Børnich explained, however, that the model shipping in 2026 will be more advanced than the one shown in the interview and mostly autonomous. Nevertheless, it will still rely to some degree on teleoperation or on the owner’s voice commands for more challenging tasks, which will enable the robot to learn. Børnich reiterated that the version shipping in 2026 is meant for early adopters who understand the product is not perfect, but insisted it will quickly become very useful. He has also noted that the Neo unit currently in his own home can autonomously retrieve a Coke from the fridge around 50% of the time.

By 2027, 1X aims to ship 100,000 units, by which time Børnich expects Neo to be fully autonomous with no human in the loop. By 2028, he expects shipments to reach 1 million units, which is even more ambitious than Tesla’s target for Optimus 3.

Figure AI – Figure

Despite being founded in 2022, US-based Figure AI is already considered one of the frontrunners in the humanoid race. In September 2025, it raised over US$1 billion in a Series C round at a US$39 billion valuation with backers such as Nvidia, Qualcomm and Salesforce.

Figure 3: Evolution of Figure humanoids (Dreamforce 2025)

Source: Salesforce – Dreamforce 2025

Figure has also secured a notable commercial agreement. In January 2024 it signed a deal with BMW to bring its robots into automotive production. Reporting has been mixed on how much useful work the robots have actually been doing since the announcement. However, CEO Brett Adcock recently said that a robot has been operating autonomously for 10-hour shifts over the past five months and has now reached operational readiness.

Adcock had initially planned to focus on commercial and industrial applications before tackling domestic use. Over the past year, however, his view has shifted and he now sees the home as a solvable challenge within a few years, possibly by 2026. He also insists Figure will not go to market using “silly” teleoperations like its competitors, and has emphasised that adding a consumer focus will not come at the expense of the company’s commercial efforts.

Currently, the company is on its third humanoid iteration, Figure 03, which was revealed in October 2025. A Time profile described Figure 03 successfully loading items into a dishwasher but struggling to fold T-shirts. However, handling clothes and towels is one of the harder challenges for humanoids, given that fabric changes form upon interaction.

Unlike its predecessors, which were experimental prototypes, Figure 03 is engineered with cost and high-volume manufacturing in mind. Figure’s dedicated manufacturing facility, BotQ, will initially be able to produce around 12,000 robots annually, with a goal of producing 100,000 units in total over the next four years.
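The gap between BotQ’s initial capacity and the four-year goal can be made concrete with a little arithmetic. The Python sketch below uses only the 12,000 and 100,000 figures quoted above. It assumes, purely for illustration, that output starts at 12,000 units in year one and then grows by a constant multiple each year; Figure has not published a ramp schedule, and the function names are hypothetical.

```python
# Figures quoted in the text above.
INITIAL_ANNUAL_CAPACITY = 12_000
CUMULATIVE_TARGET = 100_000
YEARS = 4

# If capacity never grew, four years of output would fall well short of the goal.
flat_output = INITIAL_ANNUAL_CAPACITY * YEARS
shortfall = CUMULATIVE_TARGET - flat_output


def cumulative_output(first_year: float, growth: float, years: int) -> float:
    """Total units built when annual output is multiplied by `growth` each year."""
    return sum(first_year * growth**t for t in range(years))


def required_growth(first_year: float, target: float, years: int,
                    lo: float = 1.0, hi: float = 10.0, tol: float = 1e-6) -> float:
    """Bisection search for the constant annual growth multiple that hits `target`.

    Hypothetical ramp model: constant year-on-year growth is an assumption
    made for this sketch, not a schedule disclosed by Figure.
    """
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if cumulative_output(first_year, mid, years) < target:
            lo = mid
        else:
            hi = mid
    return hi


growth = required_growth(INITIAL_ANNUAL_CAPACITY, CUMULATIVE_TARGET, YEARS)
print(f"Flat output over {YEARS} years: {flat_output:,} units (shortfall {shortfall:,})")
print(f"Implied annual output growth to reach the target: {(growth - 1) * 100:.0f}%")
```

Under these assumptions, flat output would deliver 48,000 units, so reaching 100,000 implies annual output growing by roughly half each year.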

Challenges ahead

“You have to … be able to like just talk to it and have it do anything you’d want it to do in unseen locations. That problem is not solved. That problem is 10 times, 50 times, 100 times harder than making a humanoid robot.”

Brett Adcock, Figure AI CEO, October 2025 (Nvidia GTC)

Despite tremendous progress in humanoids in recent years, major challenges remain. One persistent issue is the supply chain, as many of the components needed to build an advanced humanoid simply did not exist. These include powerful motors, specialised sensors and, more broadly, components with the durability to withstand continuous operation for many years.

Because a broader ecosystem has never really formed, companies have had to develop and manufacture a large portion of components themselves, forcing heavy vertical integration across the industry. As a result, humanoid companies are effectively building their own supply chains from scratch, a constraint that is likely to weigh on the industry for some time.

A further challenge is building a useful robotic hand and forearm. Several founders have highlighted that the hand is the hardest part of the humanoid to design, because humans rely on their hands for almost every task and hands require a huge range of precise, coordinated movements. Without a truly capable hand, even advanced humanoids will struggle with a wide range of basic tasks.

Beyond hardware, humanoid robots also face regulatory uncertainty, particularly for domestic applications. Governments will need to establish safety standards, liability frameworks and potentially certification processes before widespread home deployment can occur. Data privacy and cybersecurity present further concerns as robots with cameras, microphones and internet connectivity operating in homes could become targets for hacking or surveillance, raising questions that manufacturers have yet to fully address publicly.

Perhaps the most significant challenge, however, is developing general-purpose robotic systems. Adcock views this as the primary bottleneck, far outweighing manufacturing constraints. A key limitation today is the scarcity of high-quality real-world data for training, which companies are now trying to address in different ways. Figure plans to allocate much of its recent US$1 billion funding to hiring humans for first-person video data collection, while 1X intends to gather much of its data from its early-access Neos shipping in 2026.

However, experts question whether feeding models more data alone will suffice. Meta’s Chief AI Scientist and renowned ML expert, Yann LeCun, has warned that current approaches may excel at specific tasks but fall short of true generality (the ability to handle open-ended tasks and unfamiliar situations) without fundamental breakthroughs:

“The big secret of the industry is that none of those companies has any idea how to make those robots smart enough to be useful. Or I should say, smart enough to be generally useful.”

Yann LeCun, Meta Chief AI Scientist, October 2025 (MIT)

Legendary programmer John Carmack echoes this scepticism:

“I am more skeptical than a lot of people in the tech space about the near term utility of humanoid robots. Long term, driven by AGI, sure, they are going to be an enormous economic engine, but business plans that have them making a dent in the next five years seem unlikely.”

John Carmack, November 2024 (X)

The founders themselves remain more optimistic. Musk has highlighted a potential breakthrough threshold that he thinks Tesla can achieve, where Optimus would learn new tasks simply by watching YouTube or how-to videos, much like a human. Meanwhile, Adcock and Børnich believe fully autonomous general-purpose humanoids are just two years away. Time will tell whether more data can overcome today’s limits, or if progress stalls until deeper AI breakthroughs arrive.

The humanoid market

“There will be more humanoids on the planet than there are people. There’s absolutely no doubt about that. At least everyone wants a humanoid, but you want way more than that.”

Bernt Øyvind Børnich, 1X CEO, October 2025 (The Casey Adams Show)

CEOs of humanoid companies forecast massive TAMs, expecting billions of units in the long run. Adcock sees this as a roughly US$40 trillion opportunity, essentially all the labour in the current global economy. These companies attribute this scale to broad adoption across many sectors, from industry to the home, driven by the promise of cheap, flexible labour. Demand will also be supported by the ageing population, as labour shortages intensify and humanoids are needed to assist with elderly care.

Yet ubiquity will take time. Even if general-purpose humanoids arrive tomorrow, creating the infrastructure to manufacture billions of units will be challenging and will likely run into multiple problems, including potential regulatory hurdles and component shortages (e.g. chips). Additionally, most consumers currently cannot afford a US$20,000 robot, so prices would need to come down or income per capita would need to rise substantially.

Morgan Stanley estimates that by 2050 there will be nearly 1 billion humanoids, with 90% in industrial and commercial use cases, representing a US$4.7 trillion market.

Figure 4: Long-term forecast of humanoid revenue

Source: Morgan Stanley Research

This is similar to Bank of America’s forecast, which expects just over 1 billion humanoids by 2050 and 3 billion by 2060. However, unlike Morgan Stanley, it expects household robots to make up the majority of units. This reflects the uncertainty of how the industry will evolve.

Figure 5: Long-term forecast of humanoid units

Source: Bank of America Research

“What matters is shipping a product at scale that can generate the data to increase intelligence and reduce cost. That’s who wins in my mind. Like who’s the first through a million robots in market? That’s a big marker that will help determine like the leader.”

Brett Adcock, Figure AI CEO, November 2025 (WTF Online – Nikhil Kamath)

What also remains unclear is the future market structure: whether it will be fragmented or dominated by a few players. Many factors will shape the outcome, though one key factor could be a data moat. Adcock has argued that humanoid robotics could become a natural monopoly, as each additional robot deployed generates real-world data that improves all others. This creates a powerful flywheel: the leader in deployed units gains a growing data advantage, enabling smarter robots, driving more sales and widening the gap with competitors.

Yet this outcome is not guaranteed, as it depends on whether additional data continues to yield meaningful improvements or eventually flattens out. Future models may also require less data to learn, which could reduce the advantage of scale.

“We are not in a race against China… it doesn’t matter if China makes a million humanoid robots tomorrow. They just don’t work very well to be frank.”

Brett Adcock, Figure AI CEO, November 2025 (WTF Online – Nikhil Kamath)

Geographic leadership is also debated. Many have argued that China will have a distinct advantage due to its manufacturing capacity. Adcock downplays this, arguing that Chinese humanoids are nowhere near Figure’s capabilities and that manufacturing is not the main bottleneck. He also argues that manufacturing robots will be significantly easier than manufacturing cars as he sees humanoids as being more akin to consumer electronics products.

However, Tesla’s well-documented difficulties in scaling vehicle production, despite Musk’s expertise, together with the complexity of humanoid systems, suggest that scaling production to millions of units annually may prove more difficult than Adcock anticipates, particularly given the need for vertical integration across novel supply chains.

Ultimately, we see humanoid robots becoming a multi-trillion-dollar market, but forecasting unit volumes or market share with any precision remains difficult given the uncertainty around future AI breakthroughs. However, much of the current fog of war should begin to lift in 2026 as companies move from prototypes into mass production and robots start appearing more frequently in real-world settings. At that point, we will gain a clearer sense of how the market is likely to evolve.

Conclusion

Humanoid robotics stands on the brink of shifting from science fiction to economic reality. The abundance of cheap labour would be transformative to the global economy, substantially reducing the cost of goods and services, with some like Musk believing it could even abolish poverty. Yet the path ahead remains steep and uncharted. True general-purpose autonomy is still unsolved and the manufacturing challenge will be immense. However, the companies leading this charge are well capitalised and technically formidable. Over the next five to ten years, humanoid robotics will likely be one of the most exciting fields in technology, with rapid iteration and fierce competition that could ultimately give rise to the next generation of economic giants.

At AlphaTarget, we invest our capital in the most promising disruptive businesses at the forefront of secular trends; and utilise stage analysis and other technical tools to continuously monitor our holdings and manage our investment portfolio. AlphaTarget produces cutting-edge research and our subscribers gain exclusive access to information such as the holdings in our investment portfolio, our in-depth fundamental and technical analysis of each company, our portfolio management moves and details of our proprietary systematic trend following hedging strategy to reduce portfolio drawdowns. To learn more about our research service, please visit alphatarget.com/subscriptions/.

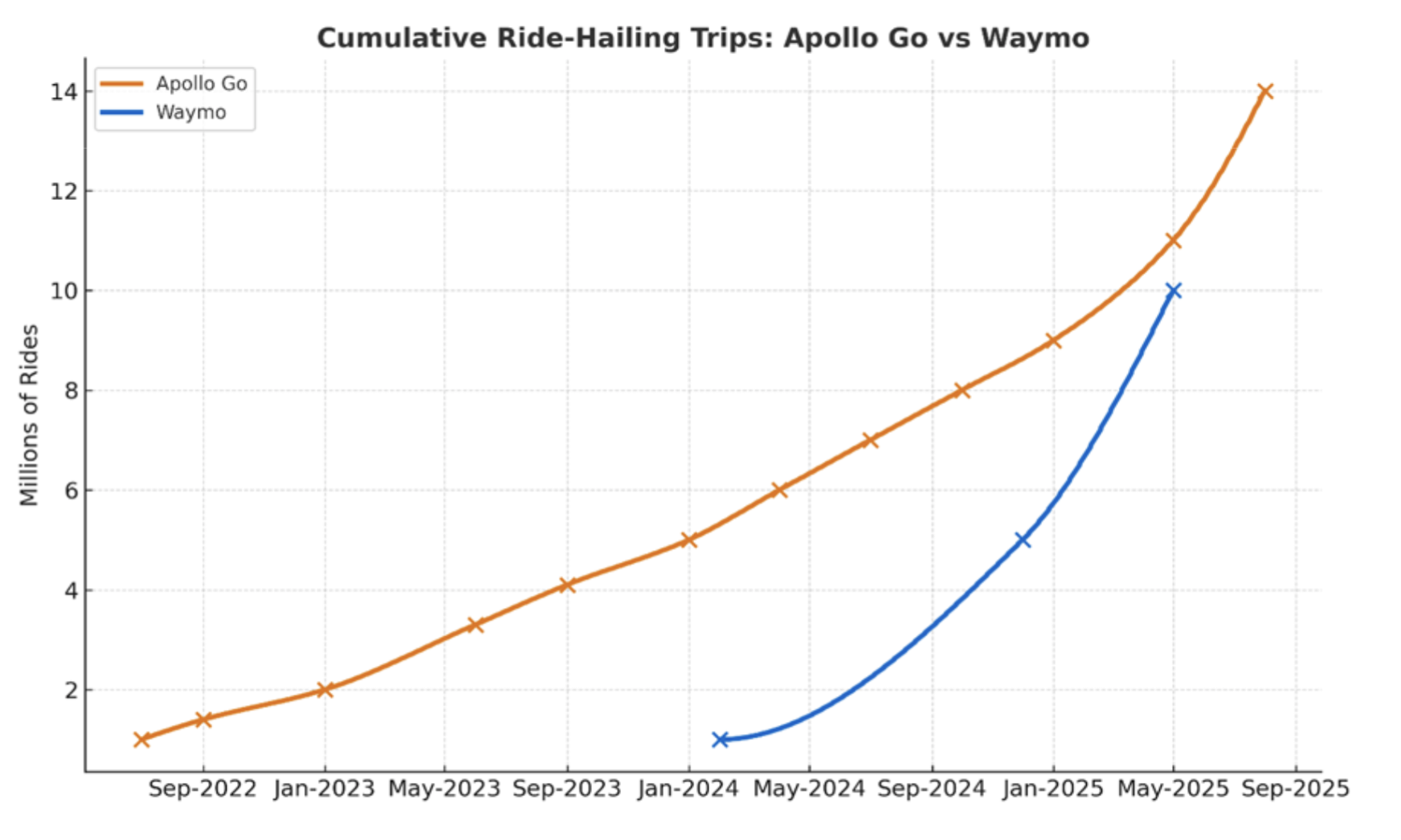

In 2025, the autonomous vehicle (AV) race accelerated sharply. Waymo’s weekly rides increased more than fivefold in just under a year, putting it neck and neck with China’s Apollo Go in cumulative autonomous miles. Meanwhile, Tesla launched its robotaxi pilot mid-year and is currently gearing up for mass production of its Cybercab, targeting 2 million units annually.

In our previous note, Driving Towards Autonomy (2024), we provided a primer on the autonomous vehicle space. This report builds on that foundation, examining key developments over the past year. We explore the surge in autonomous ride-hailing, expanding partnerships, unit economics and why we believe the AV sector is now at an inflection point.

Autonomous ride-hailing surge

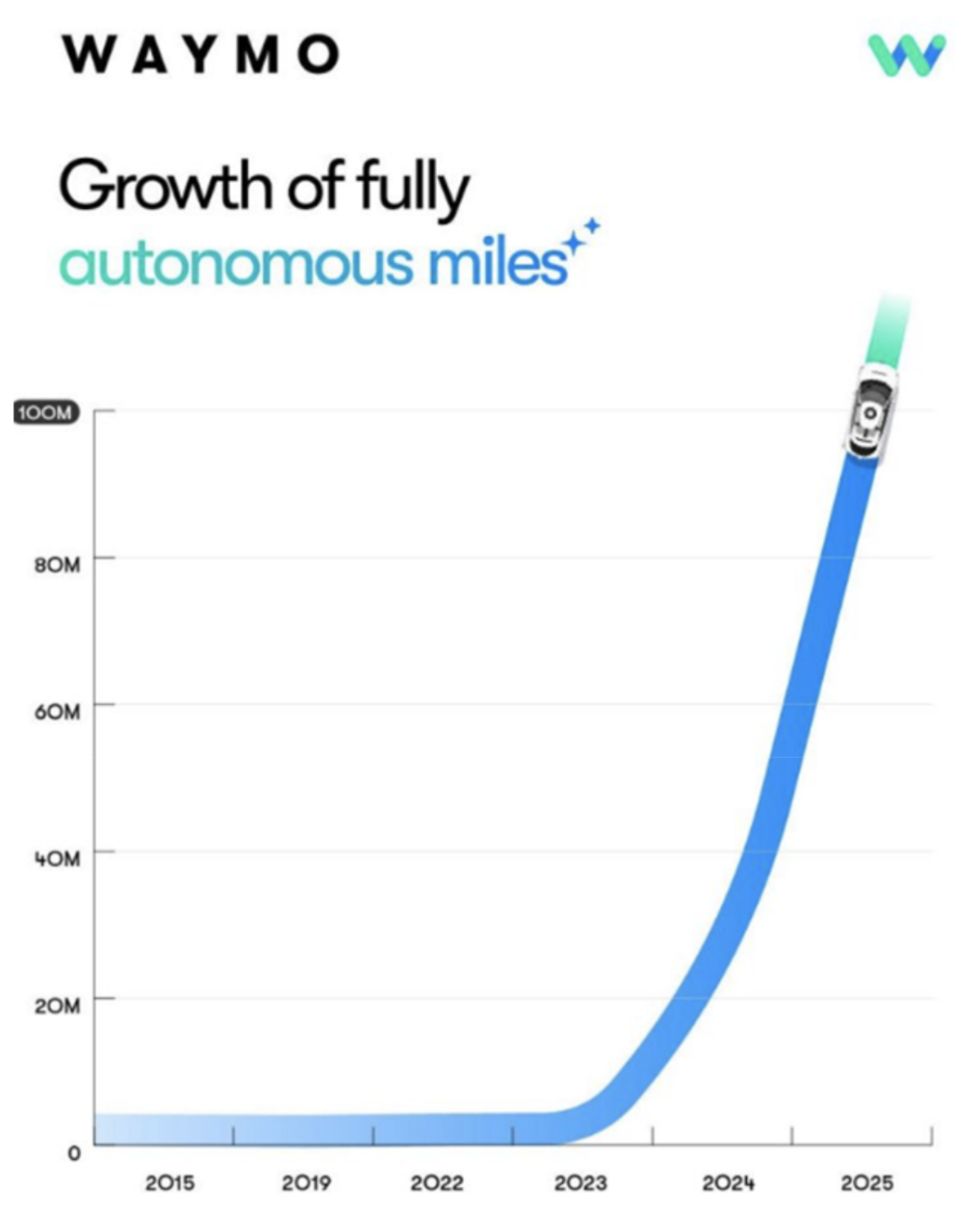

Ride-hailing has seen strong momentum over the last 12 months. Waymo’s cumulative autonomous miles reached 100 million in July 2025 (Figure A), supported by more than 250,000 trips per week across five major US cities and a fleet of over 1,500 vehicles.

Figure A. Waymo’s cumulative autonomous miles

Source: Waymo, X