Introduction

“There's this bizarre 10x per year growth in revenue that we've seen... you would think it would slow down, but we added another few billion to revenue in January.”

Dario Amodei, CEO of Anthropic, February 2026 (Dwarkesh Podcast)

Anthropic, the creator of the Claude AI models, has seen unprecedented growth in recent years. In early April 2026, its annualised revenue run-rate surpassed US$30 billion, up around 30x since January 2025. This puts it roughly in line with its major rival OpenAI, though Anthropic continues to grow at a materially faster pace.

Much of Anthropic’s success has been driven by Claude’s strength in coding and enterprise workflows. However, the company now faces several hurdles that will shape its next phase, including challenging unit economics and the path to self-funding profitability. Furthermore, Anthropic appears to be increasingly compute-constrained, with some cracks already beginning to emerge. Last but not least, competition is intensifying, with OpenAI appearing better positioned on the compute front and Chinese open-weight models continuing to advance rapidly.

In this note, we first provide an overview of Anthropic’s products and models and discuss the drivers of its success. We then examine future growth hurdles, assess Anthropic’s competitive position against OpenAI and explore the broader competitive landscape. Finally, we discuss how investors can gain exposure to the company today through public-market proxies.

Claude

Claude is Anthropic’s core AI platform, available through a chatbot and a growing range of dedicated products. It is also offered via an API, allowing its models to be integrated into other applications and workflows. Users can choose between a free tier, a Pro subscription at US$20 a month or Max plans starting at US$100 a month, while API usage is priced separately on a per-token basis.

Much of Anthropic’s product expansion has centred on agentic offerings. Claude Code, which became generally available in May 2025, is an agentic coding tool designed to help developers complete complex tasks more autonomously. Claude Cowork, which became generally available in April 2026, is aimed at non-technical users and is designed to handle multi-step tasks across a user’s computer, files and applications. Anthropic has continued to roll out new products and features at a rapid pace, spanning everything from design and productivity tools to developer infrastructure.

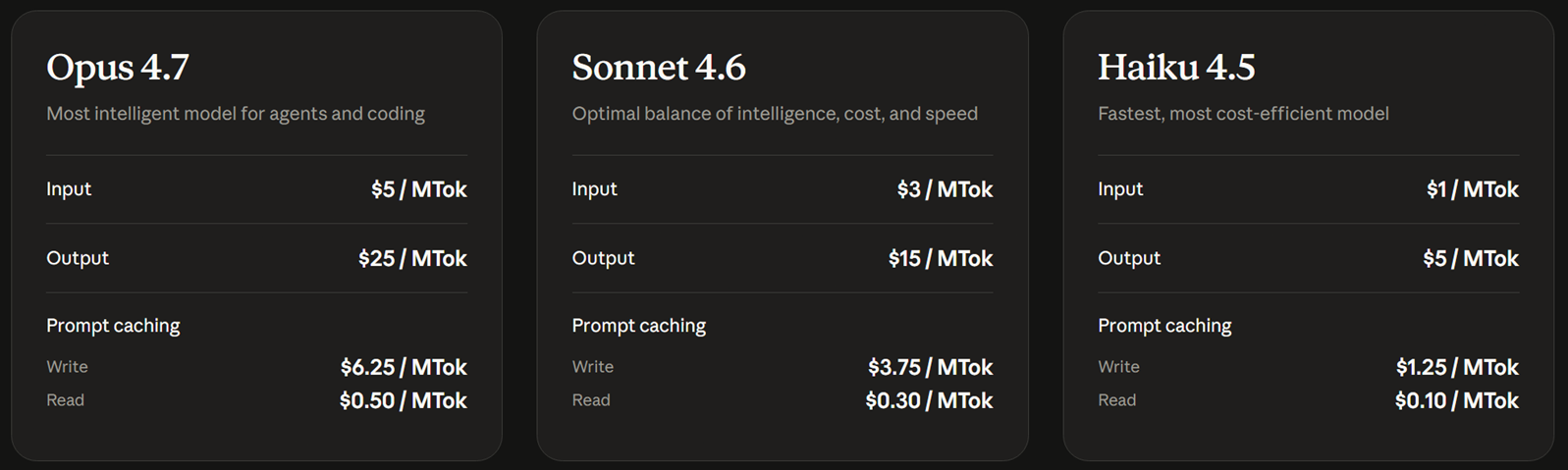

The company also offers a tiered model lineup. Opus 4.7 is the most capable broadly available model and is positioned for the most complex coding and reasoning tasks (Figure 1). Sonnet 4.6 offers the best balance of speed and intelligence, while Haiku 4.5 is the fastest and most cost-efficient option for lighter or high-volume workloads.

Figure 1: Claude Models via API – Intelligence vs Speed vs Cost

Source: Anthropic

Anthropic’s Rise

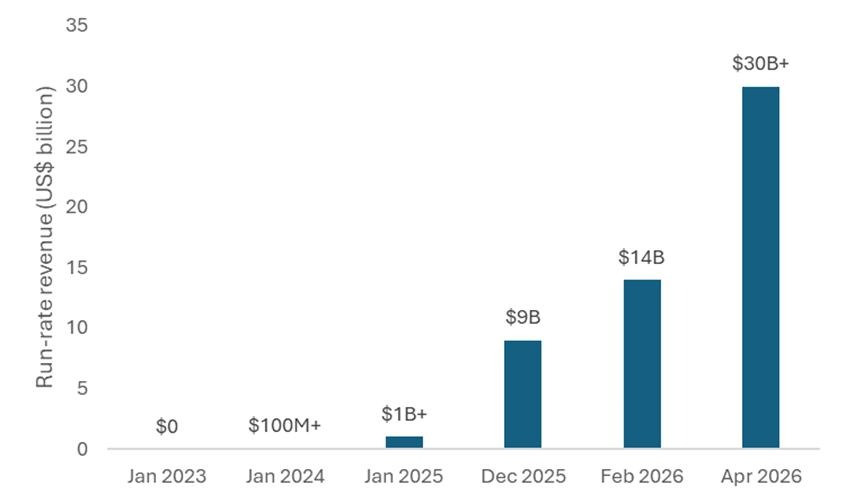

Anthropic was founded in early 2021 by a group of former OpenAI employees led by siblings Dario and Daniela Amodei. It initially operated largely out of the spotlight, but that changed as Claude gained significant traction. This was reflected in its rapid revenue growth, with its annualised run-rate increasing by roughly 10x in both 2024 and 2025 before surpassing US$30 billion by early April 2026 (Figure 2).

Figure 2: Anthropic’s exponential run-rate revenue growth

Source: Anthropic

Anthropic’s success has been driven by a confluence of factors. A primary driver was its early focus on coding, where it regularly topped benchmarks. Coding turned out to be a task where AI models particularly excelled, given the huge amount of training data available and the clear feedback loops. This enabled large productivity gains, which in turn drove significant demand from developers within enterprises.

User experience has been another key advantage. Felix Rieseberg, an engineering lead at Anthropic, recently argued on The MAD Podcast that raw model performance alone is often not enough to win a market. He pointed to Claude Code as an example: by embedding Claude directly into the terminal, Anthropic made the model more accessible and more deeply integrated into developers’ existing workflows, which helped boost adoption.

Broader availability has also helped. Anthropic has been the only frontier-model company to make its models available across all three major cloud platforms: AWS, Google Cloud and Azure. Anthropic also benefited from the viral rise of OpenClaw, an agent platform first released in November 2025 (under the name Clawd) and powered by Claude, which drove further demand.

Anthropic’s brand has also been a key asset. We have come across many anecdotes of enterprises choosing Anthropic because it is seen as more reliable and trustworthy than its competitors. Additionally, Business Insider reported in early March 2026 that Claude saw an influx of users following Anthropic’s stance against the Pentagon’s proposed use of its models. Many of those new users were defectors from ChatGPT, as OpenAI had subsequently agreed to deploy its models for the Department of War.

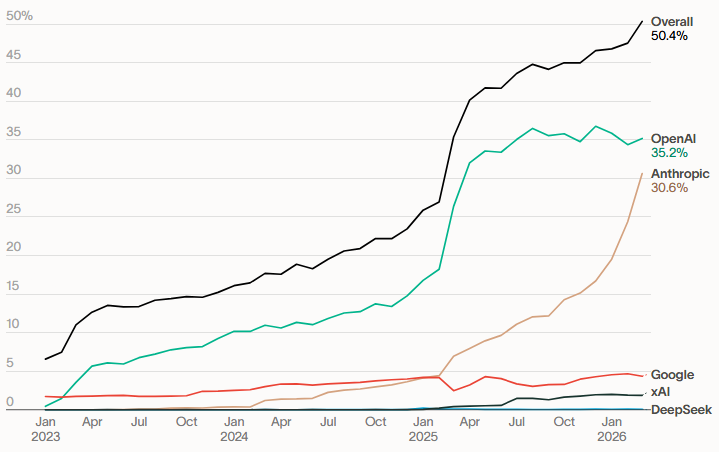

Figure 3: Model Adoption – Share of U.S. businesses with paid subscriptions to AI models, platforms and tools

Source: Ramp

The commercial base remains heavily enterprise-oriented. In October 2025, Reuters reported that, according to an Anthropic spokesperson, roughly 80% of the company’s revenue came from businesses. On 12 February 2026, Anthropic said the number of customers spending more than US$100,000 annually on Claude had grown 7x in the past year, while eight of the Fortune 10 were already customers. It also said that more than 500 customers were spending over US$1 million annually. By 6 April 2026, that figure had exceeded 1,000, doubling in less than two months.

Looking Ahead

Anthropic is now trying to build on its enterprise foothold by broadening adoption with Claude Cowork. Early signs appear encouraging, with Bloomberg reporting in April 2026 that, according to Anthropic’s Chief Commercial Officer, Cowork had seen stronger adoption in its first few weeks than Claude Code did over a comparable period a year earlier. The executive also suggested Cowork could ultimately reach a broader market than Claude Code, noting that engineers typically account for only 2% to 5% of staff at a large company, while Cowork is designed to appeal to a much wider group of non-technical users.

“There is something both impressive but also slightly terrifying about seeing a model that is so much smarter than the last model.”

Felix Rieseberg, engineering lead for Claude Cowork & Claude Code Desktop, April 2026 (The MAD Podcast)

Anthropic is also continuing to make strong progress at the model level. Opus 4.7, released in mid-April, was a solid improvement on Opus 4.6. Earlier that month, Anthropic claimed an even bigger leap with Mythos, a general-purpose frontier model that people within the company described as a “GPT-3 moment.” However, the company discovered that it had outsized cybersecurity capabilities with far-reaching implications for the safety of software and infrastructure. As such, Anthropic does not plan to make Mythos generally available, reflecting its view that releasing a model with these capabilities into the public domain today would be too dangerous.

Instead, Anthropic is providing limited access to a select group of organisations through Project Glasswing, an invitation-only programme for defensive cybersecurity work. The programme is intended to help organisations responsible for critical software and infrastructure strengthen their defences and prepare for a future in which more powerful models with Mythos-like capabilities become more widely available. Many have applauded Anthropic for acting responsibly by choosing not to commercialise the model more broadly. Others have argued the risks were overstated and that the move was more of a marketing exercise. Some have also suggested that, given Anthropic’s current compute constraints, it may not have had the capacity to support broader commercial deployment, with Mythos in its current form costing 5x as much per token as Opus 4.7.

Nevertheless, these developments show that the core opportunity is expanding on two fronts: models continue to improve rapidly in both capability and real-world relevance, while products like Claude Cowork make those capabilities accessible to far more users. This combination should sustain demand growth well beyond current levels.

The Growth Tightrope

“The amount of compute the industry is building this year is probably, call it, 10-15 gigawatts. It goes up by roughly 3x a year. So next year’s 30-40 gigawatts. 2028 might be 100 gigawatts. 2029 might be like 300 gigawatts.”

Dario Amodei, CEO of Anthropic, February 2026 (Dwarkesh Podcast)

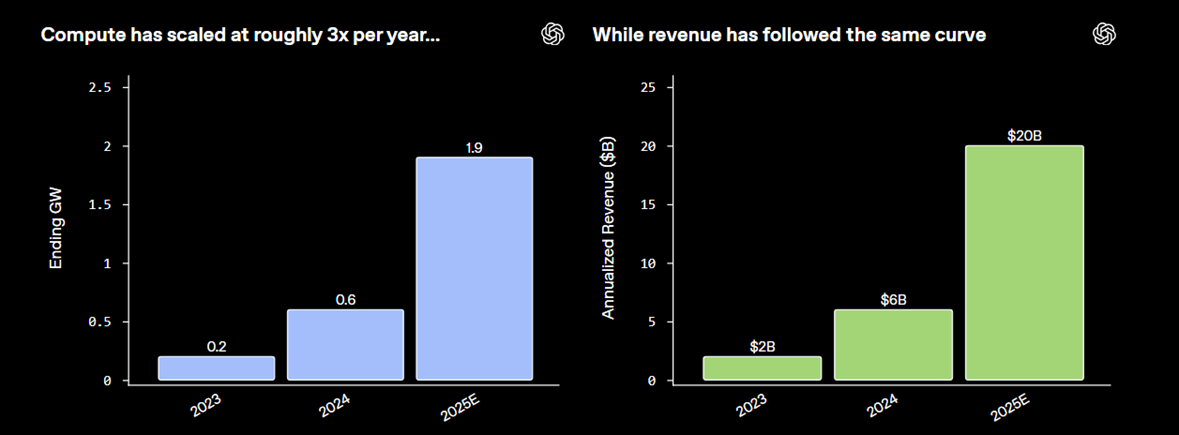

Although Anthropic’s exceptional growth is expected to continue, its pace remains highly unpredictable. This is because growth is not just a question of demand; it also depends on how much compute the company commits to in advance. As outlined in a January 2026 OpenAI blog post, compute capacity (GW) and run-rate revenue are highly correlated, with both tripling in 2024 and 2025 and each GW of compute translating into roughly US$10 billion of run-rate revenue (Figure 4). While OpenAI is not a perfect proxy for Anthropic, the chart illustrates the extent to which AI revenue growth depends on compute availability.

Figure 4: OpenAI revenue and GW correlation

Source: OpenAI

Compute availability is only one side of the equation; the margin on each dollar of revenue is the other, and here Anthropic's position is more nuanced. In January 2026, it was reported that Anthropic had revised its 2025 gross margin projection down from 50% to roughly 40%, after inference costs on third-party clouds came in higher than internally forecast. This is no doubt a sharp improvement from a gross margin of negative 94% in 2024, but it leaves the company well short of the 77% gross margin that management is reportedly targeting for 2028. Obviously, a gigawatt of compute generating revenue at 40% margins is a very different business to the same gigawatt at 77% margins, and much of Anthropic's current valuation rests on how convincingly that margin ramp can be delivered.
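
To make the margin gap concrete, the short Python sketch below converts gross margin into gross profit per gigawatt. It assumes that the roughly US$10 billion of run-rate revenue per GW OpenAI described for itself also applies, loosely, to Anthropic; that ratio is a borrowed proxy, not an Anthropic disclosure.

```python
# Illustrative sketch only: annualised gross profit from one GW of compute at
# different gross margins. ASSUMPTION: ~US$10bn of run-rate revenue per GW,
# the ratio OpenAI has described for itself, borrowed here as a rough proxy.
REVENUE_PER_GW_BN = 10.0  # US$ bn of run-rate revenue per GW (assumed)


def gross_profit_per_gw(gross_margin: float,
                        revenue_per_gw_bn: float = REVENUE_PER_GW_BN) -> float:
    """Return annualised gross profit in US$ bn for one GW at `gross_margin`."""
    return revenue_per_gw_bn * gross_margin


for label, margin in [("2025 (revised, ~40%)", 0.40), ("2028 (target, 77%)", 0.77)]:
    print(f"{label}: ~US${gross_profit_per_gw(margin):.1f}bn gross profit per GW")
```

Under these assumptions, hitting the 2028 target would nearly double the gross profit earned on each gigawatt (about US$7.7 billion versus US$4.0 billion), which is why the margin ramp matters as much as the compute ramp.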

Earlier in the year, Amodei noted that Anthropic was aiming to maintain or even exceed its 10x annual growth rate, though he acknowledged that, realistically, this trajectory could begin to bend somewhat in 2026. So far, that bending has not materialised. By early April 2026, the run-rate had more than tripled in just over three months, implying annualised growth closer to 100x. Nevertheless, most expect growth to moderate, with the run-rate reaching US$80-100 billion by year end.
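
The annualisation above is simple compounding. The sketch below applies it both to the recent pace and to the year-end expectation; the nine-month remaining period is a rounded assumption for early April to December.

```python
# Sketch: compound a growth multiple observed over a number of months into a
# 12-month equivalent. Inputs are the rough figures quoted in the text.

def annualised_multiple(growth_multiple: float, months: float) -> float:
    """Return the 12-month multiple implied by `growth_multiple` over `months`."""
    return growth_multiple ** (12.0 / months)


# Roughly 3x in about three months (January to early April 2026).
recent = annualised_multiple(3.0, 3.0)

# From ~US$30bn in early April to US$80-100bn by year end.
# ASSUMPTION: nine months is used as a round figure for the remaining period.
rest_low = annualised_multiple(80 / 30, 9.0)
rest_high = annualised_multiple(100 / 30, 9.0)

print(f"Recent pace: ~{recent:.0f}x annualised")
print(f"Implied pace for the rest of 2026: ~{rest_low:.1f}x to ~{rest_high:.1f}x annualised")
```

A clean tripling in three months compounds to 3^4 = 81x. Slightly more than 3x in slightly more than three months lands nearer the ~100x cited, depending on the exact dates used. By contrast, a US$80-100 billion year-end run-rate implies only about 3.7x to 5x annualised for the rest of the year, which is the kind of bending Amodei anticipated.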

Even so, Amodei pointed out that a 10x growth rate is not sustainable over longer periods. Each GW of compute is estimated to cost roughly US$50 billion in data centre CAPEX, so the investment required to sustain 10x revenue growth becomes too risky. The danger is that even a slight shortfall in demand and revenue could lead to bankruptcy. As he put it: “If my revenue is not US$1 trillion, if it’s even US$800 billion, there’s no force on Earth, there’s no hedge on Earth that could stop me from going bankrupt if I buy that much compute.” This risk is compounded by the fact that Anthropic is not yet self-funding at the operating level; the margin ramp has to materialise alongside the compute commitment for the overall financial structure to hold together.
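
A stylised calculation shows why the investment becomes so large. The sketch below assumes ~US$10 billion of run-rate revenue per GW (OpenAI's ratio, used as a proxy) and the ~US$50 billion of capex per GW cited above, and deliberately ignores leasing, build timing and depreciation.

```python
# Simplified sketch: compute and capex needed to support a given multiple of
# run-rate revenue growth. ASSUMPTIONS: ~US$10bn of run-rate revenue per GW
# (OpenAI's ratio, used as a proxy) and ~US$50bn of data-centre capex per GW.
# It ignores leasing versus owning, build timing and depreciation.
REVENUE_PER_GW_BN = 10.0
CAPEX_PER_GW_BN = 50.0


def compute_for_growth(run_rate_bn: float, growth_multiple: float,
                       revenue_per_gw_bn: float = REVENUE_PER_GW_BN,
                       capex_per_gw_bn: float = CAPEX_PER_GW_BN) -> tuple[float, float]:
    """Return (additional GW, capex in US$ bn) to grow `run_rate_bn` by `growth_multiple`."""
    extra_revenue_bn = run_rate_bn * (growth_multiple - 1.0)
    extra_gw = extra_revenue_bn / revenue_per_gw_bn
    return extra_gw, extra_gw * capex_per_gw_bn


for multiple in (3.0, 10.0):
    gw, capex = compute_for_growth(30.0, multiple)
    print(f"{multiple:.0f}x from US$30bn: ~{gw:.0f} GW, ~US${capex:,.0f}bn of capex")
```

In this simplified view, each extra dollar of run-rate revenue requires about five dollars of capex committed up front. Growing 10x from US$30 billion would need roughly 27 GW and US$1.35 trillion, so if demand falls short, a large share of that commitment could sit underutilised, which is the risk Amodei describes.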

Amodei assumes that industry AI compute capacity will continue to roughly triple annually, reaching ~100 GW by 2028. This is broadly in line with forecasts from analysts such as Morgan Stanley, who project 126 GW by then. If that 3x pace continued into 2029, it would imply roughly 300 GW and, at the roughly US$10 billion of run-rate revenue per GW implied by OpenAI's figures, a staggering US$3 trillion in industry revenue. Anthropic's own share of that market would depend to a large extent on its ability to secure enough capacity to keep its models at the frontier while simultaneously serving customer demand.
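
The projection can be reproduced by compounding the 10-15 GW range Amodei cites for 2026 at 3x per year. The sketch below also converts capacity to revenue at ~US$10 billion per GW, an assumption that applies OpenAI's ratio to the whole industry.

```python
# Sketch: project industry AI compute under a constant 3x annual growth rate,
# starting from the 10-15 GW range cited for 2026, and translate it into
# revenue. ASSUMPTION: ~US$10bn of revenue per GW (OpenAI's ratio) industry-wide.
REVENUE_PER_GW_BN = 10.0


def project_capacity(start_gw: float, start_year: int, end_year: int,
                     growth: float = 3.0) -> dict[int, float]:
    """Return {year: GW} assuming capacity multiplies by `growth` each year."""
    return {year: start_gw * growth ** (year - start_year)
            for year in range(start_year, end_year + 1)}


low = project_capacity(10.0, 2026, 2029)
high = project_capacity(15.0, 2026, 2029)

for year in low:
    print(f"{year}: {low[year]:.0f}-{high[year]:.0f} GW, "
          f"~US${low[year] * REVENUE_PER_GW_BN:,.0f}-"
          f"{high[year] * REVENUE_PER_GW_BN:,.0f}bn revenue")
```

The resulting ranges (90-135 GW in 2028 and 270-405 GW in 2029) bracket both the ~100 GW and ~300 GW figures and Morgan Stanley's 126 GW forecast, and the 2029 revenue range of roughly US$2.7-4.05 trillion contains the US$3 trillion estimate.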

Anthropic vs OpenAI

On 31 March 2026, OpenAI said in a press release that it was generating US$2 billion of revenue per month, which annualises to ~US$24 billion. That is around US$6 billion below Anthropic’s reported run-rate on a headline basis. However, the gap may be narrower than it appears. Multiple reports have said that Anthropic presents its revenue on a gross basis, meaning its headline figure does not deduct the revenue share paid to cloud partners, whereas OpenAI is said to report revenue on a net basis. With that cloud-partner revenue share estimated at between 5% and 27%, the two companies appear to be operating at broadly similar run-rate levels.
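
The gross-to-net adjustment is a single multiplication. The sketch below applies the estimated 5% and 27% partner shares to Anthropic's whole ~US$30 billion headline run-rate. That is a simplification, since in practice the share would apply only to revenue sold through cloud partners.

```python
# Sketch: put Anthropic's headline (gross) run-rate on a rough net basis for
# comparison with OpenAI. SIMPLIFICATION: the estimated cloud-partner revenue
# share is applied to the whole headline figure.
ANTHROPIC_GROSS_RUN_RATE_BN = 30.0  # "surpassed US$30 billion", early April 2026
OPENAI_MONTHLY_REVENUE_BN = 2.0     # per OpenAI's 31 March 2026 statement


def net_run_rate(gross_bn: float, partner_share: float) -> float:
    """Deduct a revenue share paid to cloud partners from a gross run-rate."""
    return gross_bn * (1.0 - partner_share)


openai_run_rate_bn = OPENAI_MONTHLY_REVENUE_BN * 12

for share in (0.05, 0.27):
    net = net_run_rate(ANTHROPIC_GROSS_RUN_RATE_BN, share)
    print(f"Partner share {share:.0%}: Anthropic ~US${net:.1f}bn net "
          f"vs OpenAI ~US${openai_run_rate_bn:.1f}bn")
```

The resulting net range of roughly US$22-29 billion brackets OpenAI's ~US$24 billion, which is why the US$6 billion headline gap may overstate the real difference.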

However, Anthropic’s growth trajectory is clearly ahead: OpenAI “only” grew ~20% over the past three months, versus ~233% for Anthropic. Furthermore, Anthropic’s revenue is of higher quality because the majority of its customer base is enterprise. On this basis, it would appear that Anthropic is now in the dominant position. Nevertheless, OpenAI could still mount a meaningful comeback.
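
To show the scale of that gap, the sketch below converts each company's three-month percentage growth into a multiple and compounds it over a year. This is a mechanical illustration, not a forecast, since neither pace is likely to persist unchanged.

```python
# Sketch: convert percentage growth over three months into a growth multiple
# and a 12-month equivalent, assuming the same pace simply compounds for a year.

def annualise(pct_growth: float, months: float = 3.0) -> float:
    """Return the 12-month multiple implied by `pct_growth` (e.g. 0.20) over `months`."""
    return (1.0 + pct_growth) ** (12.0 / months)


for name, pct in [("Anthropic", 2.33), ("OpenAI", 0.20)]:
    print(f"{name}: {1 + pct:.2f}x over 3 months, "
          f"~{annualise(pct):.0f}x if sustained for a year")
```

On this simple compounding, ~233% growth corresponds to roughly 120x a year and ~20% to about 2x. The first figure is the same order of magnitude as the ~100x cited earlier.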

OpenAI’s Codex, a rival to Claude Code, is now broadly competitive, with more than 2 million weekly users and growth of more than 70% month-on-month as of 31 March 2026. Additionally, enterprise now contributes more than 40% of OpenAI’s revenue and is on track to reach parity with consumer revenue by the end of 2026. OpenAI’s management also argues that its consumer reach of 900 million weekly users gives it a distribution edge, with familiarity in daily life helping drive adoption at work. Hedge fund manager Brad Gerstner also recently predicted that OpenAI’s imminent new model, Spud, would drive an inflection in revenue.

“I think it is true we’re spending somewhat less than some of the other players… I get the impression that some of the other companies have not written down the spreadsheet, that they don’t really understand the risks they’re taking.”

Dario Amodei, CEO of Anthropic, February 2026 (Dwarkesh Podcast)

Compute, however, is the area where the competitive dynamic is most contested. Dylan Patel of SemiAnalysis has argued that Anthropic’s cautious approach has left it at a relative disadvantage, while OpenAI was willing to “just sign these crazy deals”. He added that Anthropic has now had to turn to “lower-quality providers that they would not have gone to before”, noting that Anthropic historically enjoyed access to the best quality providers like Google and Amazon. The framing is provocative but warrants more nuance. Patel’s observation centres on Anthropic needing to diversify beyond its traditional premium partners into newer or smaller suppliers to address its capacity shortfall. However, this does not diminish the strategic value of Anthropic’s deep, co-designed partnership with Amazon on custom Trainium silicon, which stands as a high-quality, deliberate choice rather than a concession to inferior options.

Trainium does not match Nvidia's latest GPUs across every dimension. The software ecosystem around CUDA is more mature, and Nvidia retains the edge on flexibility for cutting-edge research workloads. However, Trainium has evolved into a genuine co-design programme between Anthropic and AWS: Anthropic is now running Claude on more than one million Trainium2 chips via Project Rainier, and the two companies' engineering teams are shaping how Anthropic will use Trainium4 and beyond. Furthermore, Trainium’s memory-bandwidth-per-dollar advantage is particularly well suited to reinforcement learning workloads. Alongside Google DeepMind, Anthropic is now one of only two frontier labs with meaningful hardware-software co-design integration.

We can hypothesise that Anthropic has accepted a different set of tradeoffs: less software flexibility and a thinner research-workload ecosystem in exchange for a better cost-per-token profile on inference, which is where most of the volume and likely margin dollars sit. For a company whose path to a 77% gross margin depends on inference economics rather than training flexibility, that is arguably an advantage rather than a compromise.

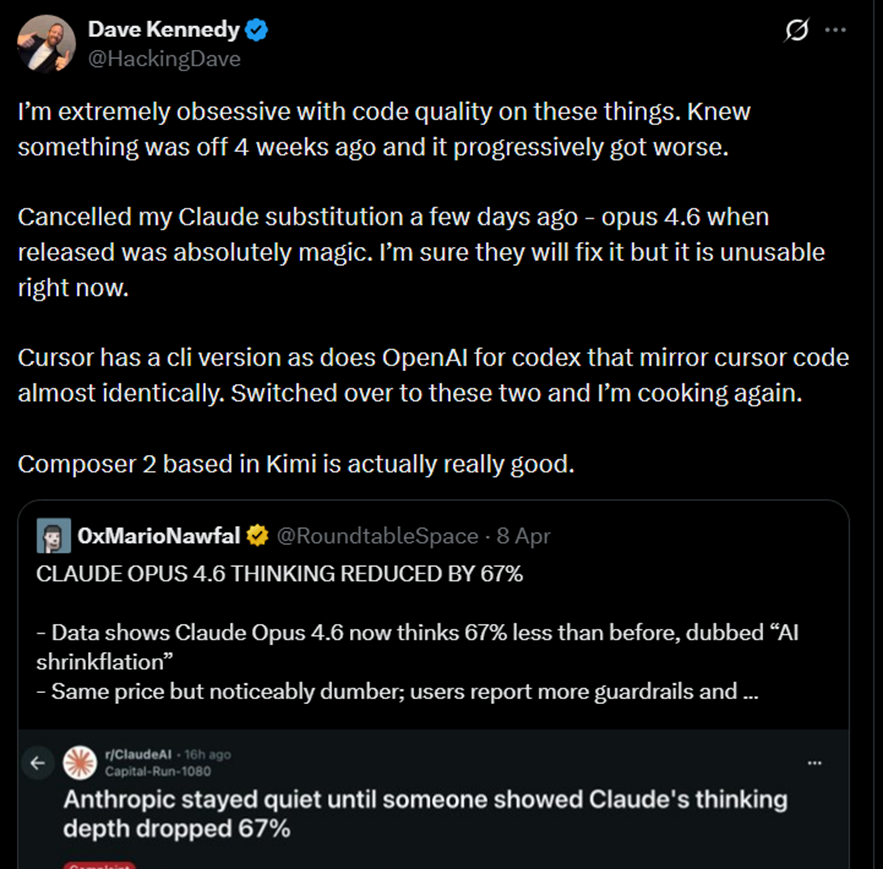

That said, capacity constraints are a separate and more immediate issue. Felix Rieseberg has acknowledged the pressure, recently saying that the overwhelming demand for the company’s products remained a challenge. In early April, Anthropic cancelled subscription-based support for third-party tools such as OpenClaw, with a spokesperson saying they placed an “outsized strain” on the company’s systems. Such tools are now supported only via the API. There has also been mounting frustration among Claude users, with many claiming that output quality has recently deteriorated significantly. One of the most high-profile complaints came from AMD’s AI Director, who reportedly said that “Claude cannot be trusted to perform complex engineering tasks.”

Although the cause of the deterioration remains disputed, with some pointing to a product update, we think it is highly plausible that this reflects capacity constraints following the recent surge in demand. Many high-profile online personalities, as well as power users we spoke with, are now moving to competitors, at least temporarily, despite the costs and frictions involved in switching providers (Figure 5). However, it remains unclear whether these examples point to a broader migration or a more limited reaction among certain users.

Figure 5: High-profile Claude user cancelling his subscription due to degraded output quality

Source: X

Although OpenAI is currently well placed to capture some of Anthropic’s momentum, it would be premature to forecast a winner. Anthropic retains a strong brand, deep enterprise traction and highly regarded models. It is also taking steps to address its weakness in compute, notably through a deal with Amazon announced on 20 April 2026 that secures up to 5 GW of capacity to train and deploy Claude. Anthropic said that significant new Trainium2 capacity would begin coming online in Q2 2026, with nearly 1 GW of combined Trainium2 and Trainium3 capacity expected by the end of 2026. Under the agreement, Anthropic committed more than US$100 billion over the next decade to AWS technologies, while Amazon invested US$5 billion in Anthropic, with up to a further US$20 billion available over time. Earlier in the month, Anthropic had also secured approximately 3.5 GW of additional capacity through Broadcom and Google, with that capacity expected to begin coming online in 2027. Taken together, these agreements should help Anthropic ease some of its near-term capacity pressure while also expanding its longer-term capacity base.

Beyond compute, both OpenAI and Anthropic are expected to soon release their next-generation models, which could swing momentum in either direction. Additionally, both companies face legal overhangs whose ultimate impact remains uncertain, with Anthropic still contesting a Pentagon supply-chain-risk designation and OpenAI heading into trial in Elon Musk’s case over its restructuring. In sum, with compute, product releases and legal outcomes all still in flux, the race remains far from over.

The Broader Competitive Landscape

The Anthropic-OpenAI competition captures the frontier-lab dynamic but understates the pressure coming from two other directions: rapidly advancing Chinese open-weight models, and vertically integrated competitors with advantages across the stack.

The open-weight gap is closing faster than most commentators acknowledge. Take Moonshot AI's Kimi K2.6, released in April 2026: on SWE-Bench Verified, the industry's most-watched coding benchmark, it is at parity with Opus while being priced at a fraction of the cost. It also supports native orchestration of up to 300 parallel sub-agents. Kimi is one of several Chinese open-weight models nipping at the heels of the frontier labs, alongside Z.ai's GLM-5.1 and DeepSeek V4, and the cadence of releases has been relentless.

Benchmark parity is an important dimension, but only one. Factors such as long-horizon agentic reliability, the polish and depth of first-party tooling (e.g., Claude Code and MCP) and safety alignment play an equally important role.

Additionally, there is the geopolitical dimension. Chinese open-weight models face real headwinds in regulated US enterprises, where procurement rules, data-sovereignty concerns and export considerations favour domestic providers. However, the rest of the world is largely open territory, and several buyer segments (e.g., European finance, healthcare and non-US government) increasingly have reasons to prefer self-hostable open weights running on their own infrastructure over any frontier-lab API, including Anthropic's.

The pressure from vertically integrated players with huge distribution advantages is also real. Google runs frontier models on its own TPUs, distributes through Workspace and Android, and owns the underlying cloud, a stack that is difficult to match on unit economics alone. xAI has moved aggressively on compute, with the Colossus cluster giving it scale that few pure-play labs can match. Meta continues to anchor the open-weight ecosystem through Llama, which, even if it does not lead on benchmarks, exerts downward pressure on pricing across the industry.

Ultimately, Anthropic’s moat rests on its software layer, namely Claude Code, Cowork, MCP and the workflows these products embed within customers. The durability of those embedded workflows, rather than any specific benchmark lead, is arguably the most important long-term factor to watch.

Investing in Anthropic

While Anthropic remains private, a range of listed companies hold stakes in it, giving public-market investors indirect exposure. Among the most closely watched are Zoom and SK Telecom, whose holdings in Anthropic are meaningful relative to their market capitalisations.

Zoom invested in Anthropic’s Series C in May 2023. The total raise was US$450 million and Reuters reported that the valuation was nearly US$5 billion. Although we do not know the exact amount Zoom invested, the company disclosed that during that period it had made US$51 million of “strategic investments in equity securities of private companies.” Some analysts believe all or the vast majority of this investment was in Anthropic. If true, that would imply a roughly 1% ownership stake before dilution from future rounds. More recently, Zoom’s CFO said on an investor call that the company’s balance sheet included a US$1.6 billion line item “of which the most significant portion is Anthropic” for the quarter ending January 2026. The company also recorded a pre-tax gain of US$532 million, which predominantly reflected its Anthropic stake. At that point, Anthropic’s most recent funding round was its Series F, which valued the company at US$183 billion post-money.
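
The ~1% estimate and its implied value can be checked with a back-of-envelope sketch. It assumes that the full US$51 million went into the Series C at a ~US$5 billion post-money valuation, and it treats the dilution rate as a purely hypothetical input rather than a disclosed figure.

```python
# Sketch: back-of-envelope Zoom stake in Anthropic. ASSUMPTIONS: the full
# US$51m went into the Series C at a ~US$5bn post-money valuation; the
# dilution rates below are hypothetical inputs, not disclosed figures.

def implied_stake(investment_bn: float, post_money_bn: float) -> float:
    """Ownership fraction bought by `investment_bn` at `post_money_bn`."""
    return investment_bn / post_money_bn


def stake_value(stake: float, valuation_bn: float,
                cumulative_dilution: float = 0.0) -> float:
    """Value in US$ bn of `stake` after `cumulative_dilution`, at `valuation_bn`."""
    return stake * (1.0 - cumulative_dilution) * valuation_bn


stake = implied_stake(0.051, 5.0)
print(f"Implied initial stake: {stake:.2%}")
for dilution in (0.0, 0.2):  # 20% is purely illustrative
    value = stake_value(stake, 183.0, dilution)
    print(f"At the US$183bn Series F, dilution {dilution:.0%}: ~US${value:.2f}bn")
```

An undiluted ~1.02% stake would be worth roughly US$1.87 billion at the Series F valuation. Because Zoom's US$1.6 billion line item also includes other holdings and reflects dilution from later rounds, the sketch indicates only the order of magnitude and cannot pin down Zoom's actual stake.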

The other popular market proxy, SK Telecom, announced a US$100 million investment in Anthropic in August 2023. This was in addition to a previous amount it had invested, though we are unaware of the size of that earlier investment. Anthropic’s valuation was also not disclosed at the time of the US$100 million investment, though some speculate that it was at a similar valuation to the Series C of nearly US$5 billion.

Both holdings have become increasingly important to the equity story for Zoom and SK Telecom as Anthropic’s valuation has continued to rise. In its 12 February 2026 Series G funding round, Anthropic was valued at US$380 billion post-money, having raised US$30 billion. Bloomberg later reported in mid-April that the company had attracted investor interest at a valuation of US$800 billion. OpenAI’s 31 March 2026 US$122 billion funding round, which valued it at US$852 billion post-money, also provides a useful reference point given that the two companies are now operating at broadly similar annualised revenue levels. However, Anthropic’s valuation could ultimately surpass OpenAI’s if it maintains its faster growth rate, although that should not be taken for granted given its compute constraints.
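
One simple way to compare these valuations is as multiples of annualised revenue. The sketch below assumes that the US$800 billion figure, which reflects reported investor interest rather than a closed round, applies to Anthropic's ~US$30 billion headline run-rate. It also shows a net range by applying the 5-27% cloud-partner share to the whole headline figure, which is a simplification.

```python
# Sketch: valuation as a multiple of annualised run-rate revenue. ASSUMPTIONS:
# the US$800bn figure is reported investor interest, not a closed round, and
# the 5-27% partner share is applied to Anthropic's whole headline run-rate.

def run_rate_multiple(valuation_bn: float, run_rate_bn: float) -> float:
    """Valuation divided by annualised run-rate revenue."""
    return valuation_bn / run_rate_bn


openai = run_rate_multiple(852.0, 24.0)
anthropic_gross = run_rate_multiple(800.0, 30.0)
anthropic_net_range = [run_rate_multiple(800.0, 30.0 * (1 - s)) for s in (0.05, 0.27)]

print(f"OpenAI at US$852bn: ~{openai:.1f}x run-rate")
print(f"Anthropic at US$800bn: ~{anthropic_gross:.1f}x gross, "
      f"~{anthropic_net_range[0]:.1f}x to ~{anthropic_net_range[1]:.1f}x net")
```

On these rough figures, a US$800 billion valuation would price Anthropic at a similar or lower revenue multiple than OpenAI's latest round (roughly 27-37x versus about 35.5x), despite Anthropic's faster growth.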

Conclusion

Anthropic has built one of the strongest positions in frontier AI through its coding excellence, agentic products and deep enterprise relationships, driving explosive revenue growth to a ~US$30 billion run-rate. However, the company now faces a more complex test: it must simultaneously scale compute at unprecedented speed, improve gross margins and defend against intensifying competition on multiple fronts. OpenAI remains formidable; Chinese open-weight models such as Kimi K2.6 are achieving benchmark parity on coding tasks at lower cost and with self-hosting flexibility; and vertically integrated players such as Google, xAI and Meta apply pressure through distribution and scale. In this environment, Anthropic’s most durable advantage will likely come from the embedded workflows in Claude Code, Cowork and MCP rather than from benchmark leadership alone. The battle for AI dominance is far from over, but it has entered a more demanding phase in which execution on compute, unit economics and product moats will determine the winners.

At AlphaTarget, we invest our capital in some of the most promising disruptive businesses at the forefront of secular trends, and utilise stage analysis and other technical tools to continuously monitor our holdings and manage our investment portfolio. AlphaTarget produces cutting-edge research, and our subscribers gain exclusive access to information such as the holdings in our investment portfolio, our in-depth fundamental and technical analysis of each company, our portfolio management moves and details of our proprietary systematic trend following hedging strategy to reduce portfolio drawdowns. To learn more about our research service, please visit https://alphatarget.com/subscriptions/.